What is Cloud Computing

- Cloud computing is the delivery of computing resources — servers, storage, databases, networking, software — over the internet, on demand.

- Instead of buying and running physical hardware, you rent capacity from a cloud provider and pay only for what you use. The billing model is pay-as-you-go: think electricity, not a generator you own and maintain.

- The practical result: no upfront capital expense, no hardware lifecycle management, and the ability to go from zero to global infrastructure in minutes.

Cloud Service Models

Cloud computing services are divided into three main models, offering varying levels of control and management:

- Infrastructure as a Service (IaaS): The most basic layer of cloud services, providing raw infrastructure like networking, storage, and servers. The cloud vendor manages the underlying hardware, while the user is responsible for managing the operating system, middleware, runtime, data, and applications.

- Platform as a Service (PaaS): Provides a ready-to-use platform for developers to build, test, and deploy applications. The vendor manages the underlying infrastructure and operating systems, allowing developers to focus entirely on writing code without worrying about server provisioning.

- Software as a Service (SaaS): A complete, ready-to-use software application hosted and managed entirely by the vendor. The user only consumes the service over the internet and does not have to worry about maintenance, scaling, or underlying infrastructure. Example: Gmail.

Cloud Deployment Models

Organizations choose how their cloud resources are deployed based on security, budget, and access needs:

- Public Cloud: Services and resources are owned and operated by a third-party cloud provider and made available to anyone over the public internet. Generally the most cost-effective option and the easiest to set up.

- Private Cloud: The cloud infrastructure is maintained on a private network protected by firewalls, and resources are used exclusively by a single organization.

- Hybrid Cloud: A combination of public and private clouds. Allows organizations to host highly sensitive data on a private cloud while utilizing the public cloud for other applications to benefit from cost savings and scalability.

Scaling

Scaling is how an application adds capacity to keep up with growing load, such as a rapid increase in user traffic.

Vertical Scaling

- The traditional approach of adding more power to a single machine by upgrading its CPU, memory, or disk space.

- Downsides: Suffers from diminishing returns in cost (larger components become disproportionately expensive) and creates a single point of failure where the entire application goes down if that one machine fails.

Horizontal Scaling

- A more modern approach where an application is cloned and hosted across multiple smaller, cheaper machines.

- Provides potentially lower costs and higher stability - if one machine fails, other instances continue to serve incoming traffic.

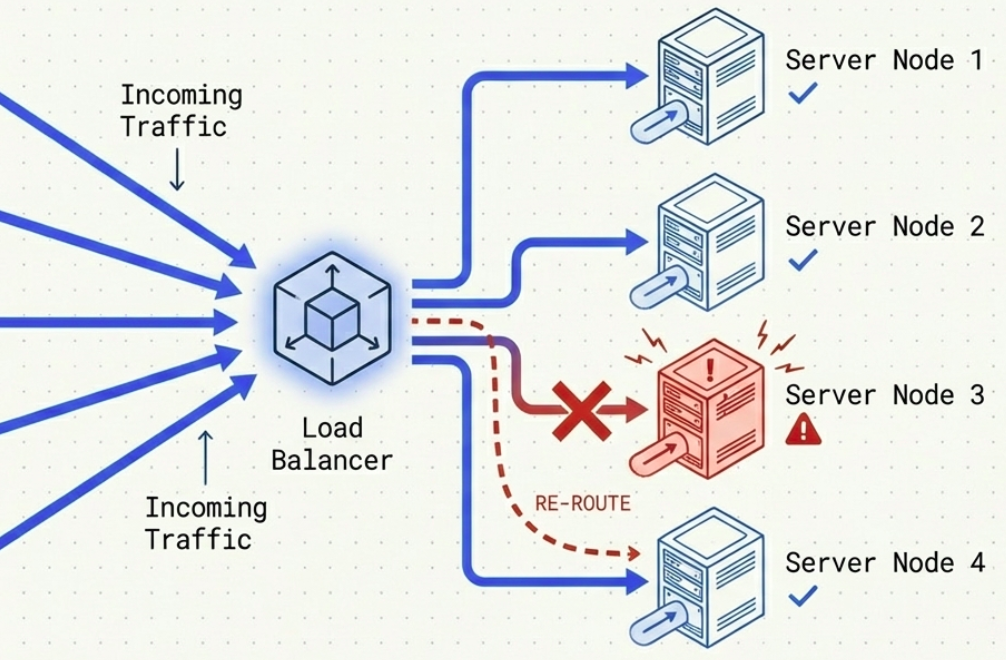

Load Balancing

- When utilizing horizontal scaling, a load balancer sits in front of the application to distribute incoming traffic across available, healthy machines.

- Acts as a virtual network layer with its own DNS name or IP address.

- Routes requests using various algorithms:

  - Round Robin: Iterating through each available machine sequentially.

  - Least Connections: Directing traffic to the machine handling the fewest active connections.

  - Resource Utilization: Sending requests to the machine with the lowest CPU utilization.

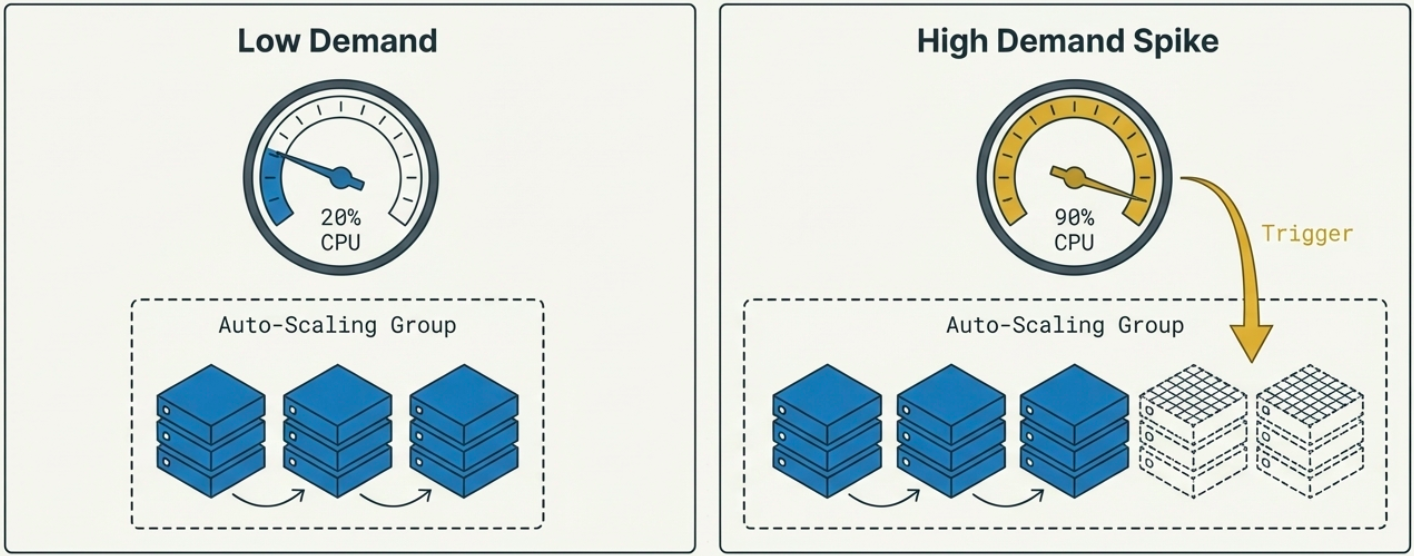
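The three routing strategies above can be sketched in a few lines. This is an illustrative in-memory model, not how any particular cloud load balancer is implemented; `Backend`, `RoundRobinBalancer`, and the `pick_*` helpers are hypothetical names, and the connection and CPU figures would come from health checks and metrics in a real system.

```python
import itertools
from dataclasses import dataclass


@dataclass
class Backend:
    """A machine behind the load balancer (hypothetical model)."""
    name: str
    healthy: bool = True
    active_connections: int = 0
    cpu_utilization: float = 0.0  # fraction between 0.0 and 1.0


class RoundRobinBalancer:
    """Cycles through backends in order, skipping unhealthy ones."""

    def __init__(self, backends):
        self._backends = list(backends)
        self._cycle = itertools.cycle(self._backends)

    def pick(self) -> Backend:
        # Inspect each backend at most once per call so this never loops forever.
        for _ in range(len(self._backends)):
            backend = next(self._cycle)
            if backend.healthy:
                return backend
        raise RuntimeError("no healthy backends available")


def _healthy(backends):
    healthy = [b for b in backends if b.healthy]
    if not healthy:
        raise RuntimeError("no healthy backends available")
    return healthy


def pick_least_connections(backends) -> Backend:
    """Least Connections: choose the healthy backend with the fewest open connections."""
    return min(_healthy(backends), key=lambda b: b.active_connections)


def pick_lowest_cpu(backends) -> Backend:
    """Resource Utilization: choose the healthy backend with the lowest CPU load."""
    return min(_healthy(backends), key=lambda b: b.cpu_utilization)
```

Round robin needs no knowledge of the backends beyond their health, which is why it is a common default. The other two strategies depend on fresh metrics from each machine.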

Autoscaling

- The automated process of adding or removing instances in response to fluctuating user traffic or resource utilization.

- Configure thresholds (e.g., a specific number of connections or CPU utilization percentage) that trigger the cloud provider to spin up new machines during traffic spikes or remove them when traffic drops, saving money.
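A threshold rule like the one described above might look roughly like this. It is a simplified sketch: `ScalingPolicy` and `desired_instance_count` are hypothetical names, the threshold values are arbitrary, and real autoscalers usually add cooldown periods and more sophisticated target tracking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingPolicy:
    """Illustrative thresholds; the numbers are assumptions, not recommendations."""
    min_instances: int = 2
    max_instances: int = 10
    scale_out_cpu: float = 0.75  # add a machine above this average CPU
    scale_in_cpu: float = 0.30   # remove a machine below this average CPU


def desired_instance_count(current: int, avg_cpu: float, policy: ScalingPolicy) -> int:
    """Return how many instances should run, given the current average CPU."""
    if avg_cpu > policy.scale_out_cpu:
        target = current + 1
    elif avg_cpu < policy.scale_in_cpu:
        target = current - 1
    else:
        target = current
    # Never go below the floor (availability) or above the ceiling (cost control).
    return max(policy.min_instances, min(policy.max_instances, target))
```

The gap between the two thresholds is deliberate: with a single threshold, a fleet hovering near it would add and remove machines on every evaluation.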

Serverless

The definition of “serverless” has evolved over time, becoming somewhat contentious.

- Original Definition (e.g., AWS Lambda): Developers write their code as “functions” and deploy it without ever provisioning or knowing about the underlying machines. The cloud provider automatically scales the infrastructure up or down, and the user strictly pays per execution or usage.

- Modern “Devolved” Definition (e.g., Amazon OpenSearch Serverless): Cloud providers increasingly label services as serverless simply because the underlying instances scale automatically and do not require manual management. However, users are still billed pro-rata for the underlying infrastructure capacity rather than strictly per execution.
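The difference between the two definitions is mostly a billing difference, which a small sketch can make concrete. The `handler(event, context)` shape mirrors the convention used for Python functions on AWS Lambda; the cost helpers and every price in the usage note are hypothetical and are not real provider pricing.

```python
def handler(event, context):
    """Function-style entry point: the platform invokes this once per event."""
    name = event.get("name", "world")
    return {"statusCode": 200, "body": f"hello, {name}"}


def per_use_cost(invocations: int, price_per_invocation: float) -> float:
    """Original definition: billed per execution, so no traffic means no charge."""
    return invocations * price_per_invocation


def capacity_cost(hours: float, capacity_units: int, price_per_unit_hour: float) -> float:
    """Newer usage of the term: billed for provisioned capacity over time, used or not."""
    return hours * capacity_units * price_per_unit_hour
```

With zero invocations, `per_use_cost(0, 0.0000002)` is zero, while `capacity_cost(24, 2, 0.25)` still charges for a full day of two capacity units.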

Going Deeper: Architecture Concepts

The rest of this page covers architectural patterns that cloud workloads use in practice. These build on the fundamentals above.

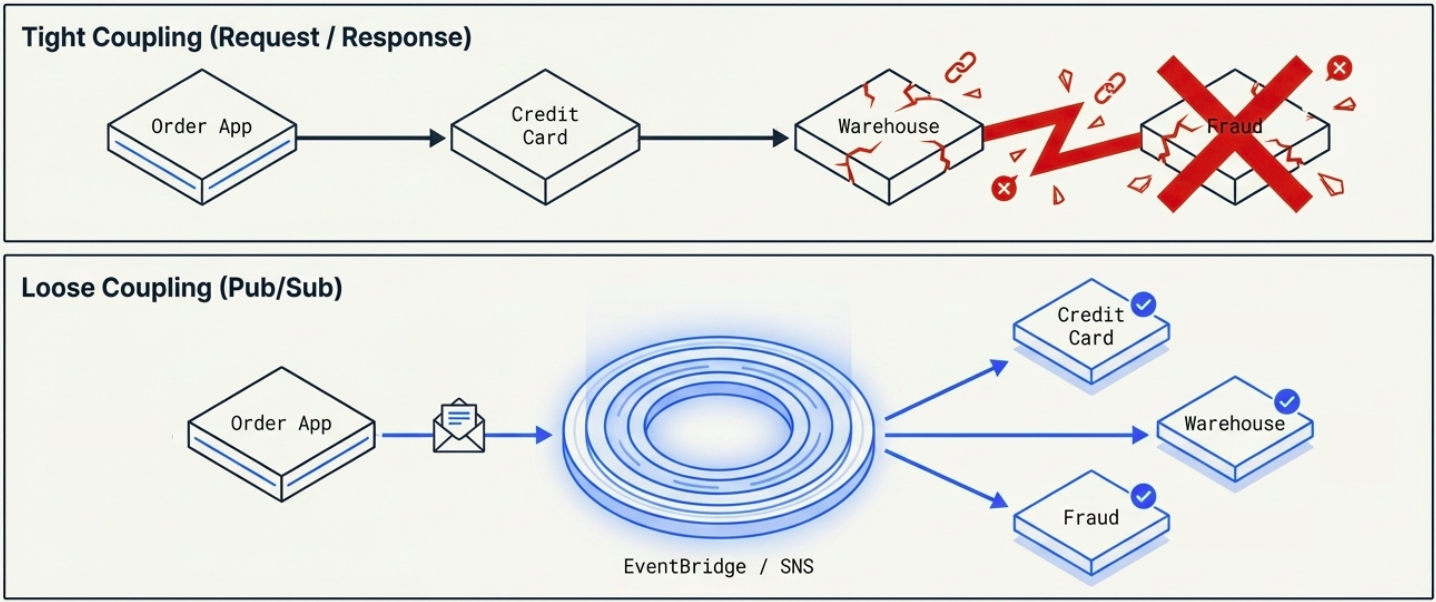

Event-Driven Architecture (EDA)

A decoupled design model that solves the problems of traditional synchronous “request-response” architectures.

The Problem with Request-Response

- Creates tight coupling - if an order is placed, the core application must sequentially call the credit card service, fulfillment service, and fraud service.

- The central service must know about all downstream dependencies, making adding new features complex.

The EDA Solution (Pub-Sub)

- The core application (the Publisher) sends a generic notification “envelope” to a central engine (like Amazon SNS or Amazon EventBridge).

- This engine “fans out” and distributes a copy of the message to any number of downstream services (Subscribers).

- The publisher never needs to know what services are listening.

- If a process needs to be reversed (like a fraud detection failure), the system simply publishes a new “order cancelled” event for downstreams to handle.
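The fan-out behaviour can be sketched with a tiny in-process event bus. This is only a model of the pattern: `EventBus`, the event names, and `demo` are hypothetical, and delivery here is synchronous, whereas a managed service delivers messages asynchronously over the network.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class EventBus:
    """Minimal pub-sub hub: publishers and subscribers only share event names."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> int:
        """Fan the event out to every subscriber; return how many received it."""
        envelope = {"type": event_type, "payload": payload}
        handlers = list(self._subscribers.get(event_type, []))
        for handler in handlers:
            handler(dict(envelope))  # each subscriber gets its own shallow copy
        return len(handlers)


def demo() -> list:
    bus = EventBus()
    log = []

    def fraud_check(event: dict) -> None:
        # Reversal is just another event; the publisher of the order is not involved.
        if event["payload"]["amount"] > 1000:
            bus.publish("order.cancelled", {"order_id": event["payload"]["order_id"]})

    bus.subscribe("order.placed", lambda e: log.append(("payments", e["payload"]["order_id"])))
    bus.subscribe("order.placed", lambda e: log.append(("fulfillment", e["payload"]["order_id"])))
    bus.subscribe("order.placed", fraud_check)
    bus.subscribe("order.cancelled", lambda e: log.append(("cancel", e["payload"]["order_id"])))

    bus.publish("order.placed", {"order_id": "o-1", "amount": 5000})
    return log
```

Adding a new downstream service means adding one `subscribe` call; the code that publishes `order.placed` does not change.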

Container Orchestration

- Containers package code, configurations, and dependencies into an isolated environment so the application can run identically anywhere, solving the “works on my machine” problem.

- Manually deploying, monitoring, and replacing failed containers on individual machines is difficult.

- Container orchestration services (like Amazon ECS, or Kubernetes via a managed offering such as Amazon EKS) automate this by deploying containers across multiple machines, automatically provisioning load balancers, running health checks, and replacing failed instances.
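At the core of an orchestrator is a control loop that compares the desired number of containers with what is actually running. The sketch below shows one pass of such a loop under heavy simplification; `Container`, `reconcile`, and the `start_container` callback are hypothetical stand-ins, not the API of ECS or Kubernetes.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Container:
    id: str
    healthy: bool = True


def reconcile(
    containers: List[Container],
    desired_replicas: int,
    start_container: Callable[[], Container],
) -> List[Container]:
    """One pass of the control loop: drop failed containers, then start replacements."""
    running = [c for c in containers if c.healthy]
    while len(running) < desired_replicas:
        running.append(start_container())
    # If there are more healthy containers than desired, keep only the first N.
    return running[:desired_replicas]
```

An orchestrator runs this kind of comparison continuously, so a failed container is replaced without anyone noticing or intervening.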

Cloud Storage

- Object Storage: A general-purpose store for unstructured data like media files (MP4s), JSON objects, and CSV files (e.g., Amazon S3). Users do not manage the underlying servers - they simply store and retrieve files from the cloud.

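- Block Storage: Virtual hard drives (volumes) that can be attached to cloud instances (e.g., Amazon EBS). A volume is usually attached to one instance at a time and has a provisioned size that you change explicitly; storage that is shared across many instances and grows on demand is typically a managed file storage service instead.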

- Databases: Includes relational databases (SQL, like Postgres or Oracle), NoSQL databases (like MongoDB or DynamoDB), and cache solutions which hold temporary data in memory for rapid application access.
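To make the cache bullet concrete, here is a minimal read-through cache with expiry, the general shape of how an in-memory cache sits in front of a slower database. `TTLCache`, `read_through`, and `load_from_database` are hypothetical names; a production setup would normally use a dedicated cache service rather than a Python dict.

```python
import time


class TTLCache:
    """In-memory key-value store whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # stale: evict and report a miss
            return None
        return value

    def set(self, key, value) -> None:
        self._entries[key] = (self._clock() + self._ttl, value)


def read_through(cache: TTLCache, key, load_from_database):
    """Serve from memory when possible; otherwise load once and remember the result."""
    value = cache.get(key)
    if value is None:  # note: a stored None is treated as a miss in this sketch
        value = load_from_database(key)
        cache.set(key, value)
    return value
```

The expiry bounds how stale cached data can get, which is the usual trade-off between read speed and freshness.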

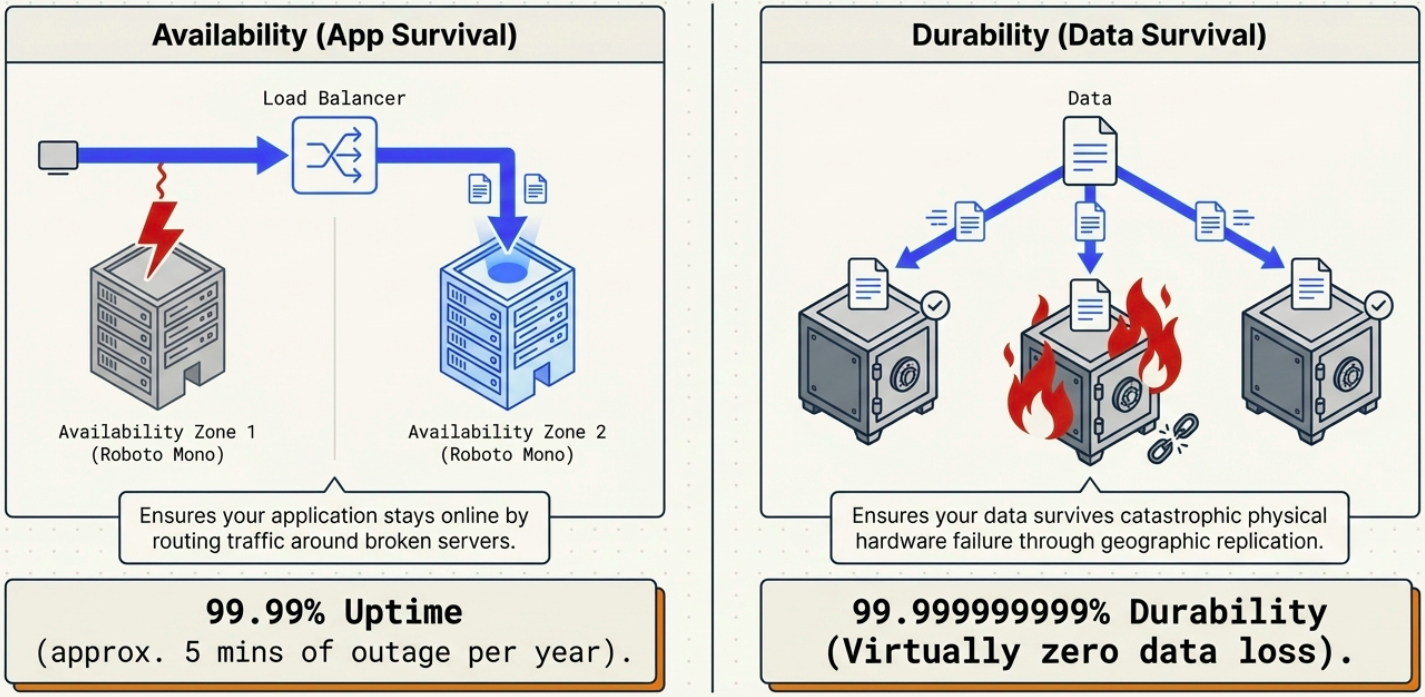

Availability vs. Durability

Availability

- Refers to the percentage of time an application is up and running (e.g., 99.999% availability means roughly 5 minutes of downtime per year).

- Achieved through horizontal scaling and placing instances in different Availability Zones - physically separate data centers (or highly partitioned buildings) with independent power and network connections.
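The arithmetic behind these figures is short enough to check directly. The sketch below assumes a 365-day year and, for the redundancy calculation, that replicas fail independently, which real deployments only approximate; the function names are illustrative.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, ignoring leap years


def downtime_minutes_per_year(availability: float) -> float:
    """Expected yearly downtime for an availability given as a fraction (e.g. 0.99999)."""
    return (1.0 - availability) * MINUTES_PER_YEAR


def combined_availability(single: float, replicas: int) -> float:
    """Availability of N redundant replicas, assuming failures are independent."""
    return 1.0 - (1.0 - single) ** replicas
```

`downtime_minutes_per_year(0.99999)` comes to about 5.26 minutes, matching the figure above. Under the independence assumption, two replicas that are each 99% available give `combined_availability(0.99, 2)` of about 99.99%, which is why spreading instances across Availability Zones helps so much.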

Durability

- Refers to data safety: how reliably stored data survives without being lost.

- Cloud providers automatically create multiple copies of stored data and distribute them across different machines and data centers, so that data is not lost even in the event of hardware failure or a natural disaster.

Infrastructure as Code (IaC)

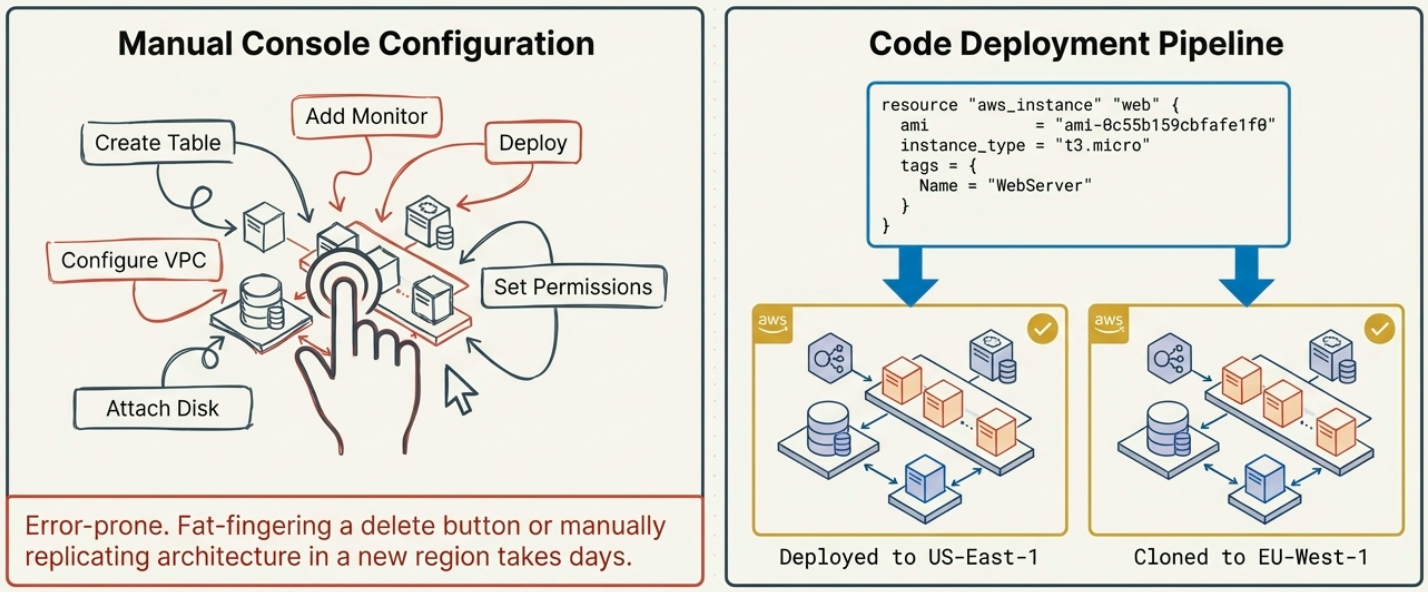

- Traditionally, engineers configured cloud resources by manually clicking through a web console, which was prone to human error and difficult to replicate across different regions.

- IaC allows users to define their infrastructure (like databases and networks) purely through code templates.

- This code can be version-controlled in Git, peer-reviewed, and easily cloned to new geographical regions.

- Common IaC Tools: AWS CloudFormation (declarative templating), AWS CDK (imperative programming with loops and conditions), and Terraform (a third-party tool compatible with multiple cloud providers like AWS, Azure, and GCP).
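As a rough illustration of the idea, the sketch below uses ordinary Python (a loop and a condition) to generate declarative, CloudFormation-style JSON templates. It only renders text and deploys nothing. `AWS::S3::Bucket` and `VersioningConfiguration` are CloudFormation names; the logical ID `AppBucket`, the environment names, and both functions are assumptions made for the example.

```python
import json


def bucket_template(environment: str, versioned: bool) -> dict:
    """Build a CloudFormation-style template describing one storage bucket."""
    bucket = {"Type": "AWS::S3::Bucket"}
    if versioned:
        # Condition in code: only some environments keep object version history.
        bucket["Properties"] = {"VersioningConfiguration": {"Status": "Enabled"}}
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": f"Example storage stack for {environment}",
        "Resources": {"AppBucket": bucket},
    }


def render_templates(environments) -> dict:
    """Loop in code: one template per environment, from a single definition."""
    return {
        env: json.dumps(bucket_template(env, versioned=(env == "prod")), indent=2, sort_keys=True)
        for env in environments
    }
```

Because the output is plain text, `render_templates(["dev", "prod"])` can be committed to Git and reviewed like any other change before anything is provisioned.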

Cloud Networks (VPCs)

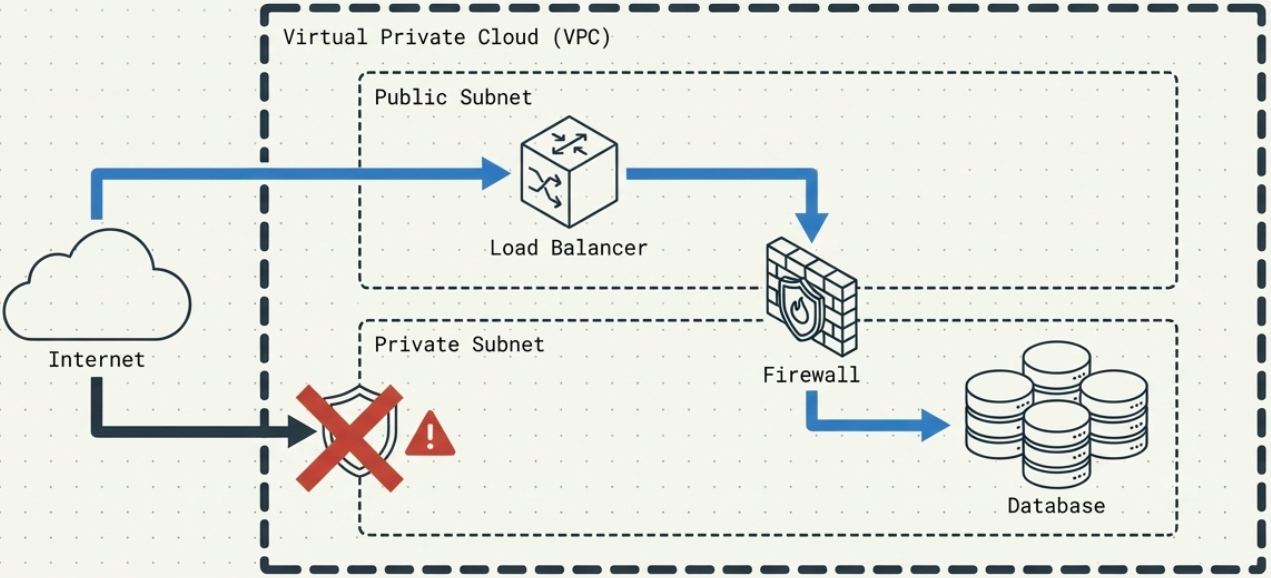

- Cloud networks allow users to isolate their resources within a broader public cloud.

- While multiple companies use the same cloud provider, their resources are walled off from each other by default.

- Users can segment their own network into subnets:

  - Public subnets for web applications

  - Private subnets for databases

- Apply strict security group rules to control which instances can communicate with the outside internet and with one another.
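Subnetting and allow-list rules can be modelled with Python's standard `ipaddress` module. This is a conceptual sketch only: the CIDR ranges, ports, `IngressRule`, `carve_subnets`, and `is_allowed` are illustrative assumptions, and real security groups support more than CIDR-and-port matching.

```python
import ipaddress
from dataclasses import dataclass
from itertools import islice


def carve_subnets(vpc_cidr: str, new_prefix: int, count: int):
    """Split a VPC address range into `count` equally sized subnets."""
    vpc = ipaddress.ip_network(vpc_cidr)
    return list(islice(vpc.subnets(new_prefix=new_prefix), count))


@dataclass(frozen=True)
class IngressRule:
    """Allow inbound traffic from source_cidr to a single port."""
    source_cidr: str
    port: int


def is_allowed(rules, source_ip: str, port: int) -> bool:
    """Default deny: traffic passes only if some rule explicitly allows it."""
    address = ipaddress.ip_address(source_ip)
    return any(
        rule.port == port and address in ipaddress.ip_network(rule.source_cidr)
        for rule in rules
    )
```

For example, `carve_subnets("10.0.0.0/16", 24, 2)` yields `10.0.0.0/24` and `10.0.1.0/24`, which could serve as the public and private subnets. A database rule such as `IngressRule("10.0.0.0/24", 5432)` then admits the web tier while rejecting any address on the public internet.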