CI/CD with Cloud Providers

Cloud providers don’t just host your application - they reshape how CI/CD pipelines are designed. When you build on cloud-native services, the pipeline delivers to defined services, not to defined servers. Infrastructure scales dynamically; your pipeline’s job is to deliver the artifact, not to manage the infrastructure that runs it.

Creational Patterns at Cloud Scale

The same creational design patterns covered in Pipeline Design Patterns operate at the cloud infrastructure level, not just the code level. In large-scale cloud environments, these patterns become architectural mandates that enforce consistency across dozens of teams and hundreds of services.

Code Repositories as a Singleton


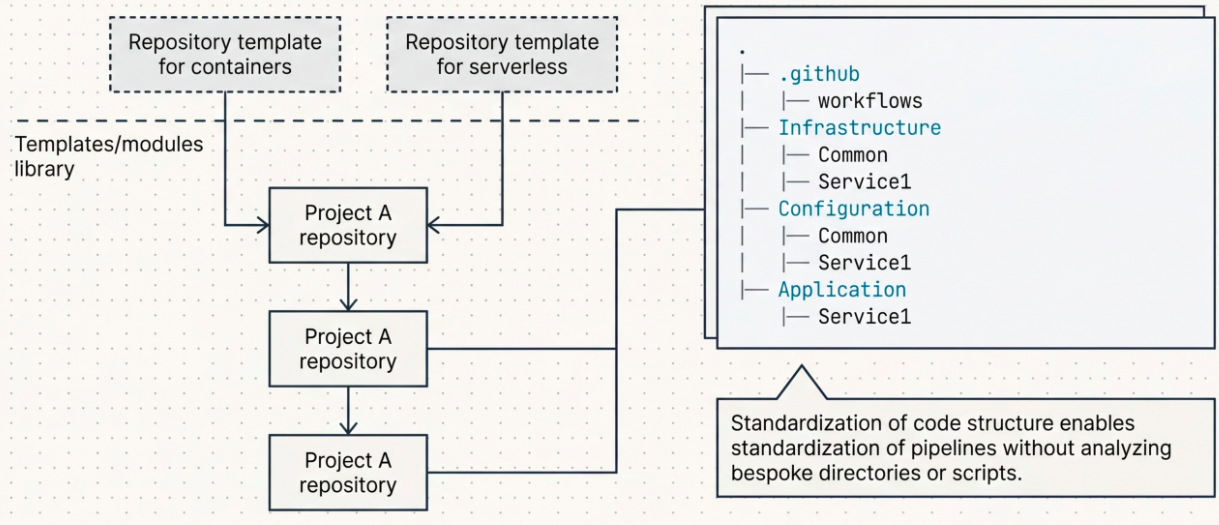

The Singleton principle - one authoritative instance, globally accessible - applies directly to how repositories and templates are structured at scale:

| Practice | How Singleton applies |
|---|---|
| Repository templates | A single canonical template repository defines the standard directory structure (Infrastructure / Configuration / Application), pre-wired scripts, and pipeline skeleton for all new projects. Teams clone it - they never build from scratch. |
| Monorepo pattern | A single repository houses multiple microservices under a standardized structure, providing one unified working model. Eliminates the coordination overhead of cross-repo changes. |
| Pipeline templates | Build, test, and deployment logic is defined once in a central module registry. When a change is needed (e.g., updating an artifact push command), it is made in one place and propagates everywhere. |
| IaC modules | A Terraform module that creates a VPC or a Kubernetes namespace is defined once and reused as a singleton across every project that needs that infrastructure component. |
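The "defined once, propagates everywhere" behaviour of pipeline templates can be sketched in a few lines of Python. This is only an illustration: `PipelineTemplate`, `TemplateRegistry`, and the `push-v1` / `push-v2` commands are hypothetical names, not part of any real CI system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineTemplate:
    """A centrally owned pipeline definition (hypothetical shape)."""
    version: str
    stages: tuple
    push_command: str  # stands in for the artifact push command


class TemplateRegistry:
    """Single authoritative source of the pipeline template."""

    def __init__(self, template: PipelineTemplate) -> None:
        self._template = template

    def publish(self, template: PipelineTemplate) -> None:
        # The one place where a change is made.
        self._template = template

    def render(self, service: str) -> dict:
        # Every service resolves the template at render time,
        # so a published change reaches all of them.
        t = self._template
        return {
            "service": service,
            "template_version": t.version,
            "stages": list(t.stages),
            "push": t.push_command.format(artifact=f"{service}.tar.gz"),
        }


registry = TemplateRegistry(
    PipelineTemplate("1.0.0", ("build", "test", "deploy"), "push-v1 {artifact}")
)
before = [registry.render(s)["push"] for s in ("orders", "payments")]

# One central change to the push command...
registry.publish(
    PipelineTemplate("1.1.0", ("build", "test", "deploy"), "push-v2 {artifact}")
)
# ...is picked up by every service on its next render.
after = [registry.render(s)["push"] for s in ("orders", "payments")]
```

Neither service holds its own copy of the push command, which is the point of the Singleton framing: there is nothing to update per project.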

Cloud-Native Architecture and CI/CD

Cloud-native development builds, deploys, and maintains software using the services cloud vendors provide natively. This changes the delivery model in three fundamental ways:

| Benefit | What it means |
|---|---|
| Increased efficiency | Smaller, faster releases drive adoption of decoupled microservice and serverless architectures |
| Increased availability | Resilient, small, independent components fail in isolation - not catastrophically |
| Reduced cost | Decoupled services scale individually - you pay for what you use, not for headroom you might need |

The Four Pillars of Cloud-Native CI/CD

Cloud-native architecture rests on four pillars. Each one has direct implications for how pipelines must be designed:

1. Immutable Infrastructure

Once infrastructure is created, it is never modified. A new application version means a new server, not an updated one.

- CI/CD implication: Pipelines always build a new artifact and provision a new environment. They never SSH into a running server, run `apt upgrade`, or overwrite files in place.

- Trade-off: Immutability simplifies rollbacks (switch traffic back to the previous environment) but increases pipeline complexity and cost - two full environments must be maintained during a deployment.
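As a rough illustration of the rule, an environment can be modelled as a frozen record: a new version is a new object, and any attempt at an in-place change fails. `Environment` and `provision` are hypothetical names used only for this sketch.

```python
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class Environment:
    """An environment is fixed at creation time (hypothetical model)."""
    name: str
    artifact_version: str


def provision(artifact_version: str) -> Environment:
    # A new application version always yields a brand-new environment.
    return Environment(name=f"app-{artifact_version}",
                       artifact_version=artifact_version)


v1 = provision("1.0.0")
v2 = provision("1.1.0")  # v1 keeps running untouched next to v2

try:
    # The equivalent of upgrading a running server in place.
    v1.artifact_version = "1.1.0"
except FrozenInstanceError:
    in_place_update_rejected = True
else:
    in_place_update_rejected = False
```

Both `v1` and `v2` exist at the same time, which is the trade-off described above: rollback is trivial, but two environments are paid for during the deployment.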

2. Microservices

Small, independently deployable services, each responsible for a single application function.

- CI/CD implication: Each microservice has its own pipeline. Development, testing, and delivery cycles are fast precisely because the scope is small.

- Key rule: A pipeline should never update an application inside a running container - it must build an entirely new container. Immutability and microservices are inseparable in cloud-native CI/CD.

3. API-Driven Communication

APIs are the contracts between loosely coupled services. CI/CD pipelines must enforce these contracts at every testing phase:

| Test phase | What to verify |
|---|---|
| Unit tests | API code logic - does the handler process inputs correctly? |
| Contract tests | Does the implemented API exactly match the defined contract (schema, response codes, field names)? |
| Integration tests | Do API connections work end-to-end, whether connecting to real or mocked dependencies? |
| End-to-end tests | Do all services integrate correctly with minimal mocking - as close to production as possible? |
| Performance & security tests | How much traffic can the API sustain? Is it vulnerable to SQL injection, IDOR, or broken authentication? |
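The contract-test row is the one that is easiest to leave vague, so here is a minimal sketch of what such a check might compare. The `CONTRACT` dictionary and its field names are invented for illustration; real projects typically express the contract in a schema format such as OpenAPI and use dedicated tooling.

```python
# Hypothetical contract for a single endpoint: status code plus exact fields.
CONTRACT = {
    "status": 200,
    "fields": {"id": int, "email": str, "active": bool},
}


def contract_violations(status: int, body: dict, contract: dict = CONTRACT) -> list:
    """Return human-readable mismatches between a response and its contract."""
    problems = []
    if status != contract["status"]:
        problems.append(f"status {status} != {contract['status']}")

    expected = contract["fields"]
    for name in sorted(expected.keys() - body.keys()):
        problems.append(f"missing field: {name}")
    for name in sorted(body.keys() - expected.keys()):
        problems.append(f"unexpected field: {name}")
    for name in sorted(expected.keys() & body.keys()):
        # Exact type match, so a bool is not accepted where an int is required.
        if type(body[name]) is not expected[name]:
            problems.append(
                f"wrong type for {name}: expected {expected[name].__name__}, "
                f"got {type(body[name]).__name__}"
            )
    return problems


ok = contract_violations(200, {"id": 7, "email": "a@example.com", "active": True})
bad = contract_violations(200, {"id": "7", "email": "a@example.com"})
```

A pipeline stage would fail the build whenever the returned list is non-empty, which keeps consumers of the API from discovering the drift first.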

4. Infrastructure as Code

IaC shifts the mental model from infrastructure at rest (static, provisioned once) to infrastructure in motion (dynamic, scaled by thresholds).

- CI/CD implication: The pipeline delivers to a service, not a server. It does not control node counts, cluster sizes, or replica sets - orchestrators do. The pipeline’s responsibility is scoped to delivering the artifact and triggering the deployment.
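The split of responsibilities can be sketched as follows. The scaling rule has the same shape as the Kubernetes Horizontal Pod Autoscaler formula (desired = ceil(current × metric / target)); the `Service` class, its method names, and the replica bounds are assumptions made for this example.

```python
import math


def desired_replicas(current: int, metric: float, target: float,
                     minimum: int = 1, maximum: int = 10) -> int:
    """Orchestrator-side, threshold-driven scaling rule."""
    wanted = math.ceil(current * metric / target)
    return max(minimum, min(maximum, wanted))


class Service:
    """Hypothetical managed service: the pipeline may only change the image."""

    def __init__(self, image: str, replicas: int) -> None:
        self.image = image
        self.replicas = replicas

    def deploy(self, image: str) -> None:
        # Pipeline responsibility: deliver the artifact, trigger the rollout.
        self.image = image

    def autoscale(self, cpu_percent: float, target_percent: float) -> None:
        # Orchestrator responsibility: react to the threshold.
        self.replicas = desired_replicas(self.replicas, cpu_percent, target_percent)


svc = Service(image="shop:1.0.0", replicas=4)
svc.deploy("shop:1.1.0")                          # replica count is not touched
svc.autoscale(cpu_percent=90, target_percent=60)  # 4 * 90 / 60 -> 6 replicas
```

Note that `deploy` has no replica parameter at all: the pipeline cannot set capacity even by accident.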

Cloud Provider CI/CD Toolsets

Microsoft Azure - Azure DevOps (ADO)

Azure DevOps is a fully independent SaaS platform - despite the name, it does not require workloads to be hosted on Azure. It integrates with any cloud platform or on-premises environment.

| Component | Purpose |
|---|---|
| Azure Boards | Project management - work items, sprints, backlogs, kanban boards |
| Azure Repos | Source code management - Git repositories with branch policies |
| Azure Pipelines | CI and CD execution - YAML-defined pipelines, multi-stage, multi-environment |
| Azure Test Plans | Manual and automated test management and execution |
| Azure Artifacts | Artifact and package registry - Java, JS, Python, .NET, and custom artifacts with fine-grained permissions |

AWS CodePipeline

AWS’s CI/CD solution is a collection of discrete services, orchestrated under the CodePipeline umbrella rather than a single integrated platform.

| Component | Role | Equivalent |
|---|---|---|
| AWS CodeCommit | Source code repositories | Azure Repos / GitHub |
| AWS CodeBuild | CI / build execution | Azure Pipelines (CI stage) |
| AWS CodeDeploy | CD / deployment orchestration | Azure Pipelines (CD stage) |
| AWS CodeArtifact | Package and artifact registry (Ruby, Rust, Swift, Java, Python) | Azure Artifacts |

Security across the AWS CI/CD stack is handled natively by IAM (access control), KMS (encryption), and CodeGuru (automated code reviews and security scanning).

Repository Strategy for Cloud Workloads

Repository structure decisions compound in cloud-native environments because they directly affect how pipelines are triggered, how dependencies are shared, and how teams coordinate.

Microservices on GCP (API Gateway architecture)

When Cloud Endpoints or Apigee routes requests across multiple services hosted on GKE or Cloud Run, and those services share databases or discovery infrastructure (Service Directory), a monorepo containing shared infrastructure and configuration is typically the natural fit. Cross-service changes can be tested and deployed atomically - one commit, one pipeline run, one validated artifact set.
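In a monorepo, a single pipeline run still has to decide what to rebuild. One common approach, sketched here under an assumed directory layout (`services/<name>/...` for application code, `infrastructure/` and `configuration/` for shared resources), is to map the commit's changed paths to affected services. The service names are placeholders.

```python
from pathlib import PurePosixPath

# Hypothetical monorepo layout.
SERVICES = ("catalog", "orders", "payments")
SHARED_ROOTS = ("infrastructure", "configuration")


def affected_services(changed_paths: list) -> list:
    """Map the files changed in one commit to the services to rebuild."""
    affected = set()
    for raw in changed_paths:
        parts = PurePosixPath(raw).parts
        if not parts:
            continue
        if parts[0] in SHARED_ROOTS:
            # Shared infrastructure or configuration touches every service.
            return sorted(SERVICES)
        if parts[0] == "services" and len(parts) > 1 and parts[1] in SERVICES:
            affected.add(parts[1])
    return sorted(affected)


single = affected_services(["services/orders/app.py", "README.md"])
everything = affected_services(["services/orders/app.py", "infrastructure/vpc.tf"])
```

Because the decision is made per commit, a change that spans two services and their shared configuration is still validated as one unit.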

Serverless Workloads (Cloud Functions / Cloud Run)

Serverless on GCP comes in two flavors with different CI/CD implications:

| Runtime | Scope | CI/CD characteristic |
|---|---|---|
| Cloud Functions | One function per trigger event - an HTTP trigger for GET, a Pub/Sub trigger for async processing, etc. | Each function is a discrete deployable unit; pipelines must simulate trigger events accurately for integration tests |
| Cloud Run | A fully containerized service that scales to zero. Accepts any HTTP traffic. | Closer to microservices in scope; benefits from container-based CI (build once, promote across environments) |
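Simulating a trigger event in a pipeline test mostly means reproducing its envelope faithfully. The sketch below assumes a Pub/Sub-style event in which the message payload arrives base64-encoded under a `data` key alongside an `attributes` map; `handle_order_event` and `make_pubsub_event` are hypothetical names, and the exact event shape should be checked against the runtime you deploy to.

```python
import base64
import json


def handle_order_event(event: dict, context=None) -> dict:
    """Hypothetical function body for a Pub/Sub-triggered function."""
    payload = json.loads(base64.b64decode(event["data"]).decode("utf-8"))
    return {"order_id": payload["order_id"], "status": "processed"}


def make_pubsub_event(message: dict, attributes: dict = None) -> dict:
    """Build a test event with the same encoding the handler expects."""
    data = base64.b64encode(json.dumps(message).encode("utf-8")).decode("ascii")
    return {"data": data, "attributes": attributes or {}}


event = make_pubsub_event({"order_id": 42}, attributes={"source": "ci"})
result = handle_order_event(event)
```

A test that passed the handler a plain dictionary instead would succeed locally and fail on the first real message, which is the kind of inaccuracy the table warns about.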

The recommended repository model for GCP serverless is hybrid:

- Polyrepo at the application level - each major domain or service has its own repository

- Monorepo within each service - bundle the function or Cloud Run service code with its required resources (Cloud Storage buckets, Pub/Sub topics, Cloud SQL schemas) using Terraform modules, so infrastructure and application code are provisioned together in a single pipeline run

Cloud Deployment Strategies

Deployment strategies are not cloud-exclusive, but cloud-native tooling makes them far easier to implement and automate. The choice of strategy depends on the branching model, application architecture, and deployment platform.

Blue-Green Deployment

A complete mirror of the production environment is provisioned and fully validated before any traffic is switched.

| Aspect | Detail |
|---|---|
| How it works | Deploy v2 to a standby environment identical to production (v1). Switch DNS to point to v2 when ready. |
| Rollback | Fast - switch DNS back to v1 (in practice, how quickly clients follow depends on DNS TTLs and caching) |
| Advantages | Zero production disruption until cutover; full testing of v2 before any real traffic |
| Disadvantages | Doubles infrastructure cost; database consistency during the switch is complex; highest overhead of any deployment pattern |
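The cutover logic itself is small. In this sketch, `BlueGreenRouter` is a hypothetical stand-in for whatever sits in front of both environments (a DNS record or a load balancer), and the `validate` callback represents the test suite run against the standby environment.

```python
from typing import Callable


class BlueGreenRouter:
    """Hypothetical traffic switch in front of two full environments."""

    def __init__(self, live: str, standby: str) -> None:
        self.live = live
        self.standby = standby

    def cut_over(self, validate: Callable[[str], bool]) -> bool:
        # Traffic moves only after the standby environment passes validation.
        if not validate(self.standby):
            return False
        self.live, self.standby = self.standby, self.live
        return True

    def roll_back(self) -> None:
        # The previous environment is still running, so rollback is a swap.
        self.live, self.standby = self.standby, self.live


router = BlueGreenRouter(live="blue-v1", standby="green-v2")

rejected = router.cut_over(validate=lambda env: False)  # failed validation
live_after_reject = router.live

switched = router.cut_over(validate=lambda env: env == "green-v2")
live_after_switch = router.live

router.roll_back()
```

The sketch deliberately ignores the hard part named in the table: data written to v2's database between cutover and rollback.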

Variations to reduce cost and complexity:

| Variant | How it differs |
|---|---|
| Blue-Violet | Only the components between the load balancer and database are duplicated - endpoints stay unchanged. Reduces cost but increases coordination complexity in large organizations where many teams deploy simultaneously |
| Red-Black | Combines Blue-Violet architecture with canary concepts - traffic is split by percentage between versions instead of a hard cutover, limiting blast radius if v2 has defects |

Canary Deployment

Named after the canary-in-a-coal-mine method - one new container goes live, receives a small slice of traffic, and proves itself before the full switchover.

| Aspect | Detail |
|---|---|
| How it works | Deploy one container running v2. Route a small percentage of traffic to it. Monitor. If healthy, progressively shift remaining containers to v2. |
| Rollback options | Roll back - restore v1 immediately; Roll forward - ship a fast-follow fix through the pipeline without restoring v1 |
| Advantages | No architectural changes required; easy to automate; real traffic validates the change before full exposure |
| Disadvantages | The canary’s traffic sample may not be representative of peak load - the deployment can appear to succeed and then fail at scale |
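A canary rollout is essentially a loop over traffic percentages with a health gate at each step. The sketch below uses an invented error-rate function and a 1% threshold purely to illustrate the control flow; real systems read these values from monitoring.

```python
from typing import Callable, List, Tuple


def run_canary(steps: List[int],
               error_rate_at: Callable[[int], float],
               max_error_rate: float = 0.01) -> Tuple[str, List[Tuple[int, float]]]:
    """Shift traffic step by step; stop at the first unhealthy reading."""
    history = []
    for percent in steps:
        rate = error_rate_at(percent)
        history.append((percent, rate))
        if rate > max_error_rate:
            # Unhealthy: send all traffic back to v1.
            return "rolled_back", history
    return "promoted", history


def observed(percent: int) -> float:
    # Made-up behaviour: v2 looks healthy on small samples, degrades under load.
    return 0.002 if percent < 50 else 0.05


outcome, history = run_canary([5, 25, 50, 100], observed)
```

The invented `observed` function reproduces the disadvantage in the table: the first two steps look healthy, and the defect only shows once v2 carries half the traffic.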

Rolling Deployment

Traffic shifts to the new version in incremental batches over defined time intervals.

| Aspect | Detail |
|---|---|
| How it works | Define a rollout percentage (e.g., 25% of instances). Update that batch, validate, then proceed to the next batch. |
| Advantages | Many intermediate checkpoints; lower burst risk than canary; no architectural investment |
| Disadvantages | Two versions of the application run simultaneously for an extended period - managing API and schema compatibility across versions adds complexity; full completion takes significantly longer than canary |
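The batch arithmetic can be sketched directly. The rounding rule (round the batch size up, never below one instance) and the instance names are assumptions for this example; orchestrators expose their own knobs for this.

```python
import math
from typing import Callable, List, Tuple


def rolling_batches(instances: List[str], batch_percent: float) -> List[List[str]]:
    """Split instances into update batches of roughly batch_percent each."""
    if not 0 < batch_percent <= 100:
        raise ValueError("batch_percent must be in (0, 100]")
    size = max(1, math.ceil(len(instances) * batch_percent / 100))
    return [instances[i:i + size] for i in range(0, len(instances), size)]


def rolling_update(instances: List[str], batch_percent: float,
                   update: Callable[[str], None],
                   validate: Callable[[str], bool]) -> Tuple[List[str], bool]:
    """Update batch by batch; stop at the first batch that fails validation."""
    done: List[str] = []
    for batch in rolling_batches(instances, batch_percent):
        for instance in batch:
            update(instance)
        if not all(validate(instance) for instance in batch):
            return done, False  # earlier batches stay on the new version
        done.extend(batch)
    return done, True


instances = [f"i{n}" for n in range(1, 9)]
versions = {name: "v1" for name in instances}

completed, ok = rolling_update(
    instances, 25,
    update=lambda name: versions.__setitem__(name, "v2"),
    validate=lambda name: versions[name] == "v2",
)
```

With eight instances and a 25% rollout this yields four batches of two, so both versions serve traffic until the fourth batch completes.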

Team Topologies

Adopting cloud-native requires more than new tooling - it requires restructuring how teams are organized. The Team Topologies framework (Skelton & Pais) is a widely adopted model for modern DevOps team design.

The foundation is Conway’s Law: the design of a software system will mirror the communication structures of the organization that built it. Get the team structure wrong, and the architecture follows.

The Four Team Types

| Team type | Role |
|---|---|
| Stream-aligned | Aligned to a specific product, service, or business capability. Owns end-to-end delivery for their stream - the primary delivery team type. |
| Enabling | Helps stream-aligned teams adopt new capabilities or overcome capability gaps. Temporary, not permanent - they skill up other teams, then move on. |
| Complicated subsystem | Owns a technically complex component requiring deep specialist expertise (e.g., a video codec engine, a cryptographic library). Reduces cognitive load on stream-aligned teams. |
| Platform | Provides internal services to stream-aligned teams - the “cloud within the cloud.” Abstracts infrastructure, tooling, and pipeline scaffolding so that product teams can self-serve. |

The Three Interaction Modes

| Mode | What it looks like |
|---|---|
| X-as-a-Service | The platform team exposes capabilities through a stable API or self-service interface. Stream-aligned teams consume without needing to understand the implementation. |
| Collaboration | Two teams work together closely for a defined period to achieve a shared goal. Temporary - not a permanent dependency. |
| Facilitation | An enabling team works alongside a stream-aligned team to help them build new skills or adopt new tooling. |

The Platform Team and Landing Zones

The Platform Team is responsible for the organization’s landing zone - the secure, scalable, multi-account cloud environment that all other teams deploy into.

Platform Team responsibilities:

- Manage and evolve the landing zone infrastructure

- Maintain the core CI/CD toolset (pipelines, runners, artifact registries)

- Create and own the central pipeline skeleton that stream-aligned teams extend

- Publish standardized IaC modules, container templates, and pipeline templates as internal services