The Mechanics of Containerization

What Is a Container Image?

A container image is an immutable, self-contained package that bundles everything your application needs to run. Think of it as a snapshot: a blueprint that describes the state of a filesystem and the configuration needed to launch a process from it.

What Goes Inside a Container Image?

A container image can include any combination of the following:

| Content Type | Description |
|---|---|
| System packages | OS-level utilities and libraries (e.g., glibc, openssl) |
| A runtime | The execution environment for your app (e.g., JVM, Node.js, Python interpreter) |
| Library dependencies | Application-level libraries your code imports |
| Source code | Your application’s source files (in interpreted languages) |
| Binaries | Pre-compiled executables |
| Static assets | HTML, CSS, images, config templates |
| Configuration | Container configuration (entrypoint, env vars, user, working dir) |

Containers vs. Container Images

| Concept | Definition |
|---|---|
| Container Image | A static, read-only blueprint stored on disk. It doesn’t “run”; it just exists. |
| Container | A live instance created from a container image. It is running only while its main process is alive. |

- When you run a container image, the container runtime executes the program specified in the image’s entrypoint (e.g., starting the JVM for a Java app).

- A container is only running while that process is alive. If the process exits or is killed, the container stops. With Docker, a stopped container (including its writable layer) still exists until it is removed, for example with `docker rm`.

What Happens When a Container Starts?

Two important things happen automatically when a container is launched:

1. Private File System Seeding

The contents of the container image are used to seed a private, isolated file system for the container. Every process inside the container sees this file system, and only this file system, as if it were the entire machine.

2. Virtual Network Interface

The container gets its own virtual network interface with a local IP address. Your application can bind to this interface and listen on a port to receive incoming network traffic.
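To make the binding step concrete, here is a minimal Python sketch using only the standard library. It binds to `0.0.0.0`, meaning every IPv4 address in the container's network namespace. This matters inside a container: `127.0.0.1` is the container's own private loopback interface, so a server bound only to it cannot receive traffic arriving on the container's virtual interface. The function name `listen_on_all_interfaces` is hypothetical.

```python
import socket

def listen_on_all_interfaces(port: int) -> socket.socket:
    """Open a TCP listening socket on every IPv4 address in this network namespace."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    # 0.0.0.0 covers all interfaces, including the container's virtual one.
    # Binding to 127.0.0.1 would only accept connections from inside the container.
    sock.bind(("0.0.0.0", port))
    sock.listen()
    return sock
```

A real service would pass a fixed port (for example 8080) and call `accept()` in a loop; passing port 0 lets the kernel pick any free port, which is convenient for testing.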

Container Configuration

The configuration section of a container image tells the runtime how to turn the image into a running container. Key settings include:

Entrypoint

The entrypoint is the command executed when the container starts. For example:

- A Java app → `java -jar app.jar`

- A Python app → `python main.py`

- A compiled binary → `/usr/local/bin/myapp`

Environment Variables

Used to pass runtime configuration into your application without baking it into the image. Common uses: database URLs, API keys, feature flags, log levels. For example:

- `DATABASE_URL=postgres://user:pass@host/db`

- `LOG_LEVEL=info`

User

Specifies which OS user the container process runs as.

Working Directory

Sets the default directory from which the entrypoint command is executed (for example, a working directory of `/app` is equivalent to running `cd /app` before starting your process).
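The OCI image specification stores these settings in the image's `config` object, in fields named `Entrypoint`, `Cmd` (default arguments), `Env`, `User`, and `WorkingDir`. The following Python sketch shows, under simplified assumptions, how a runtime could turn such a config into the process it launches: the argument vector is `Entrypoint` followed by `Cmd`. The fallbacks for an empty `WorkingDir` (`/`) and `User` (root) follow Docker's behavior; the `ProcessSpec` type, the function name, and the `appuser` account are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class ProcessSpec:
    argv: List[str]      # program plus its arguments
    env: Dict[str, str]  # environment variables
    cwd: str             # working directory
    user: str            # user name or UID

def process_from_config(config: dict) -> ProcessSpec:
    """Derive the process to launch from an OCI-style image config (simplified)."""
    argv = list(config.get("Entrypoint") or []) + list(config.get("Cmd") or [])
    if not argv:
        raise ValueError("image config has neither Entrypoint nor Cmd")
    env = {}
    for entry in config.get("Env") or []:
        key, _, value = entry.partition("=")  # split on the first '=' only
        env[key] = value
    return ProcessSpec(
        argv=argv,
        env=env,
        cwd=config.get("WorkingDir") or "/",  # empty means the filesystem root
        user=config.get("User") or "0",       # empty means root (UID 0)
    )

# Example config for a Java app ("appuser" is a hypothetical account).
example_config = {
    "Entrypoint": ["java", "-jar", "app.jar"],
    "Env": ["DATABASE_URL=postgres://user:pass@host/db", "LOG_LEVEL=info"],
    "User": "appuser",
    "WorkingDir": "/app",
}

if __name__ == "__main__":
    print(process_from_config(example_config))
```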

Runtime Overrides

When starting a container, you can override the image’s default values for:

- The entrypoint command

- Arguments passed to the entrypoint

- Environment variables

This makes images reusable across different environments (dev, staging, production) without rebuilding.
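The following Python sketch layers run-time overrides over the image defaults, modelled on `docker run` semantics: overriding the entrypoint also discards the image's default `Cmd`, trailing arguments replace `Cmd`, and environment variables are merged key by key with run-time values winning. The function name and the dict-based config are assumptions for illustration. The image's own config is copied, never modified, which is what lets one image serve every environment.

```python
import copy

def apply_overrides(config, entrypoint=None, args=None, env=None):
    """Return a new config with run-time overrides applied; the image config is untouched."""
    merged = copy.deepcopy(config)
    if entrypoint is not None:
        merged["Entrypoint"] = list(entrypoint)
        merged["Cmd"] = []  # a new entrypoint drops the image's default arguments
    if args is not None:
        merged["Cmd"] = list(args)  # explicit arguments replace Cmd
    if env:
        current = {}
        for entry in merged.get("Env") or []:
            key, _, value = entry.partition("=")
            current[key] = value
        current.update(env)  # run-time values win over image defaults
        merged["Env"] = [f"{key}={value}" for key, value in current.items()]
    return merged
```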

The Linux Kernel Features That Make Containers Possible

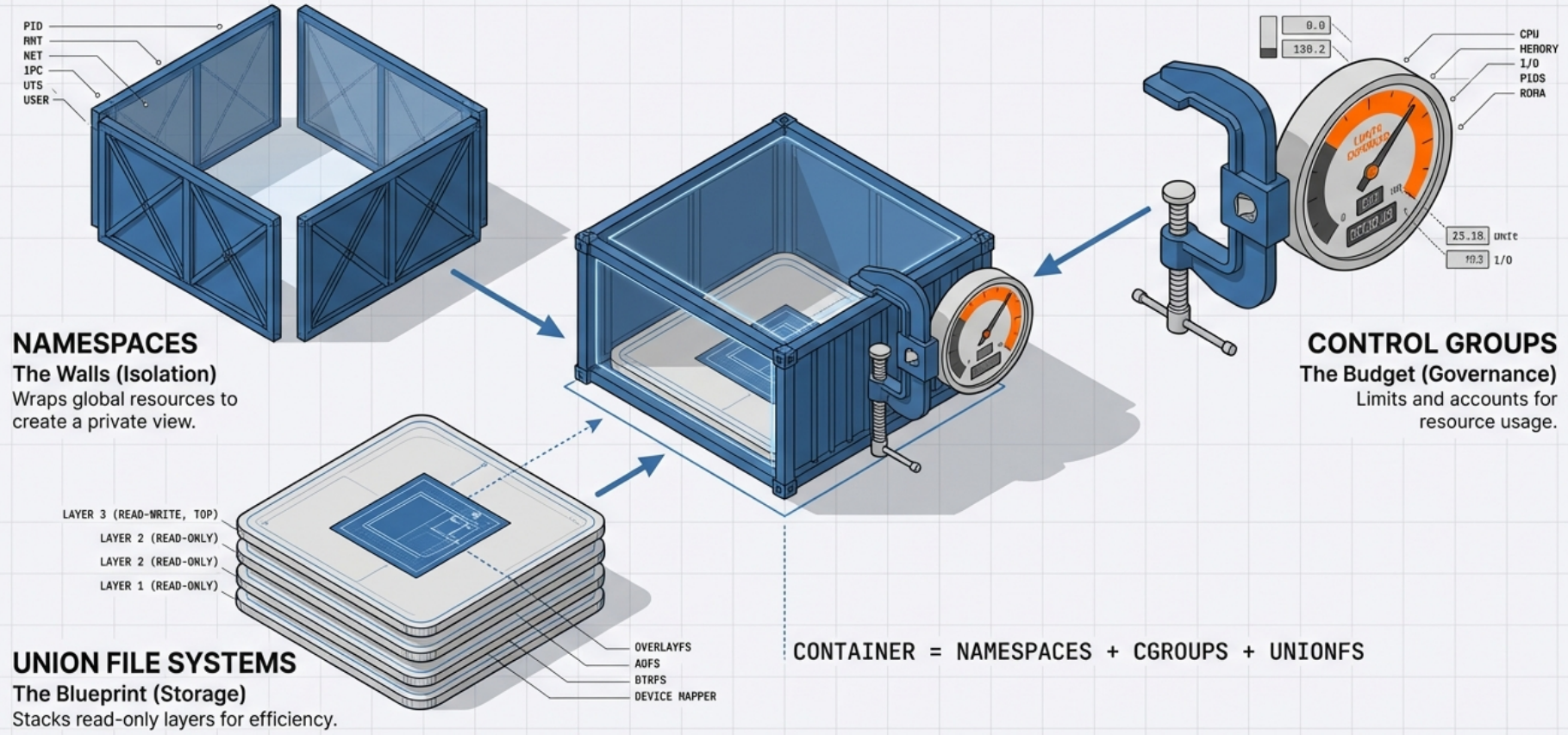

Containers are not virtual machines. They don’t emulate hardware or run a full guest OS. Instead, they rely on three foundational Linux kernel features to provide isolation, resource control, and efficient storage.

1. Namespaces - Isolation


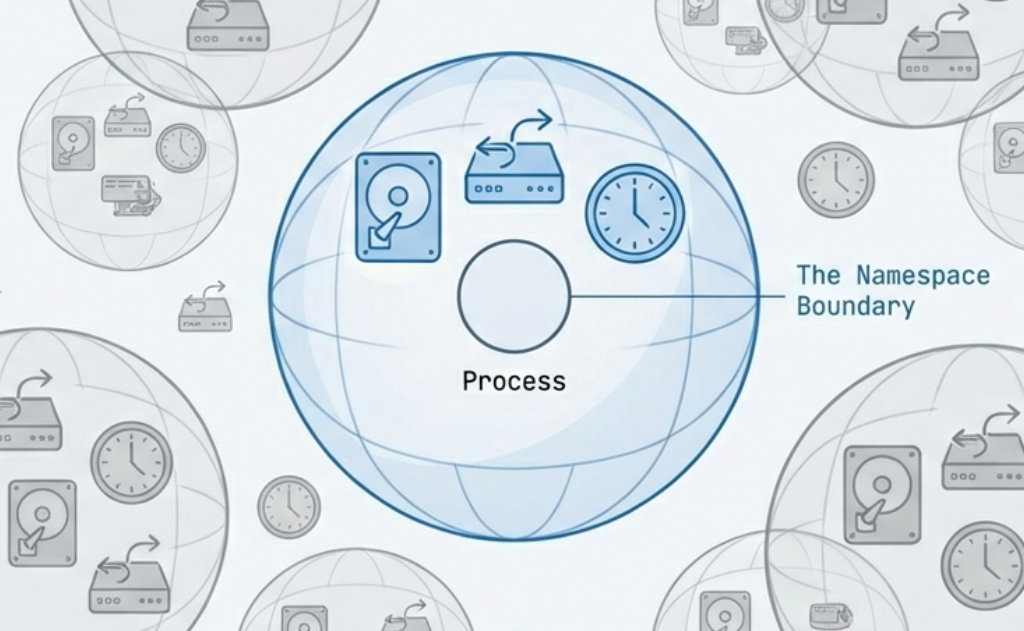

A namespace wraps a global system resource so that processes within the namespace have their own isolated view of it. From inside a namespace, a process believes it has the entire resource to itself.
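On Linux, a process's namespace memberships are visible as symbolic links under `/proc/<pid>/ns/`, whose targets look like `pid:[4026531836]`. Two processes share a namespace when the corresponding links resolve to the same value. This Python sketch reads them; the helper names are hypothetical, and reading another process's links may require extra privileges, so failures are skipped.

```python
import os

NAMESPACE_TYPES = ["pid", "net", "mnt", "uts", "ipc", "user", "cgroup"]

def namespace_ids(pid="self"):
    """Map namespace type -> identifier such as 'net:[4026531840]' for one process."""
    ids = {}
    for ns in NAMESPACE_TYPES:
        try:
            ids[ns] = os.readlink(f"/proc/{pid}/ns/{ns}")
        except OSError:
            continue  # no /proc, older kernel, or insufficient privileges
    return ids

def same_namespace(pid_a, pid_b, ns):
    """True if both processes belong to the same namespace of the given type."""
    a, b = namespace_ids(pid_a), namespace_ids(pid_b)
    return ns in a and a.get(ns) == b.get(ns)

if __name__ == "__main__":
    for ns, ident in namespace_ids().items():
        print(f"{ns:7} {ident}")
```

Running it once on the host and once inside a container shows different identifiers for every namespace type the runtime created.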

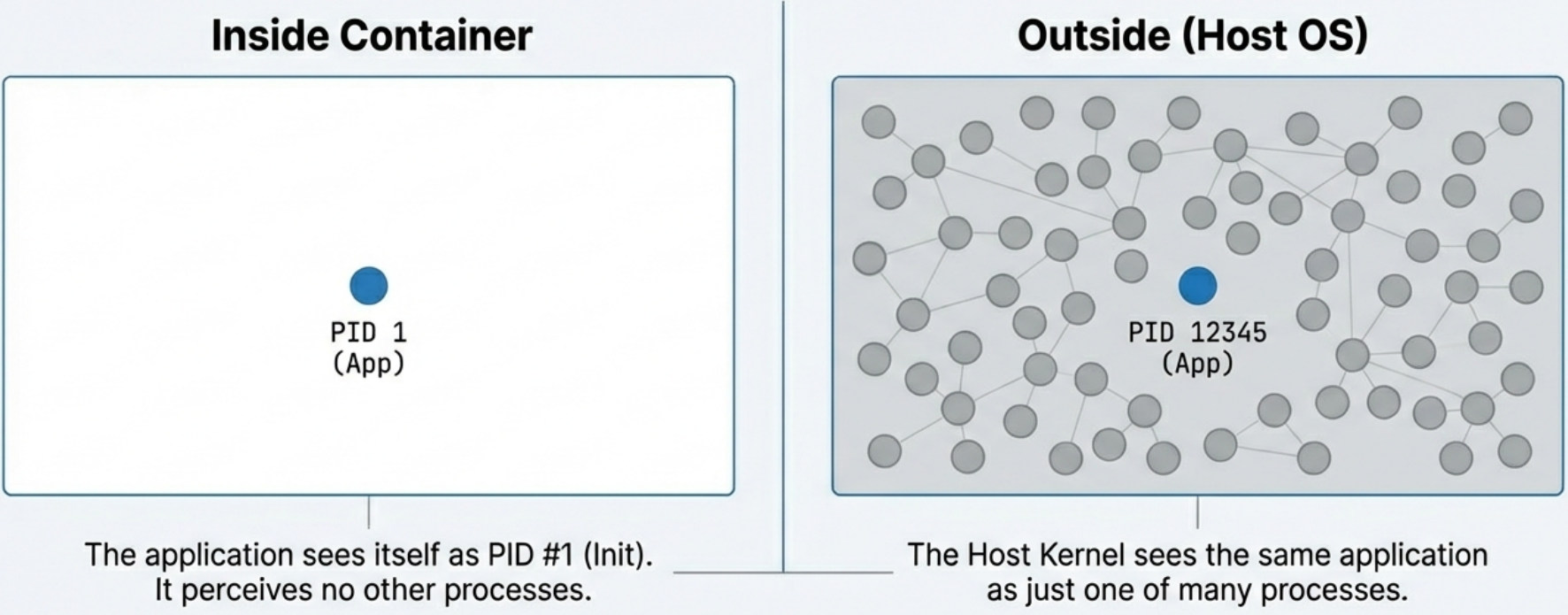

PID Namespace (Process Isolation)


- Inside the container, your application’s main process is assigned PID 1, even if on the host machine it’s actually running as PID 12345.

- The process cannot see, signal, or interact with processes running outside its PID namespace.

- This is why running `ps aux` inside a container only shows the container’s own processes.
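The two PID numbers can be observed directly. On Linux 4.1 and later, the `NSpid:` line in `/proc/<pid>/status` lists a process's PID in each PID namespace it belongs to, starting with the namespace of whoever mounted that procfs and moving inward. Read on the host for a containerized process, it would show something like `NSpid: 12345 1`. This Python sketch parses that line; the function names are hypothetical and the sample status text is made up for illustration.

```python
from typing import List

def parse_nspid(status_text: str) -> List[int]:
    """Return the PIDs on the NSpid line, outermost namespace first."""
    for line in status_text.splitlines():
        if line.startswith("NSpid:"):
            return [int(field) for field in line.split()[1:]]
    return []  # no NSpid line (kernel older than 4.1)

def nspid(pid: str = "self") -> List[int]:
    """Read and parse /proc/<pid>/status for a live process (Linux only)."""
    with open(f"/proc/{pid}/status") as status:
        return parse_nspid(status.read())

# Made-up excerpt: PID 12345 on the host, PID 1 inside its container.
SAMPLE_STATUS = "Name:\tjava\nPid:\t12345\nNSpid:\t12345\t1\n"

if __name__ == "__main__":
    print(parse_nspid(SAMPLE_STATUS))  # [12345, 1]
```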

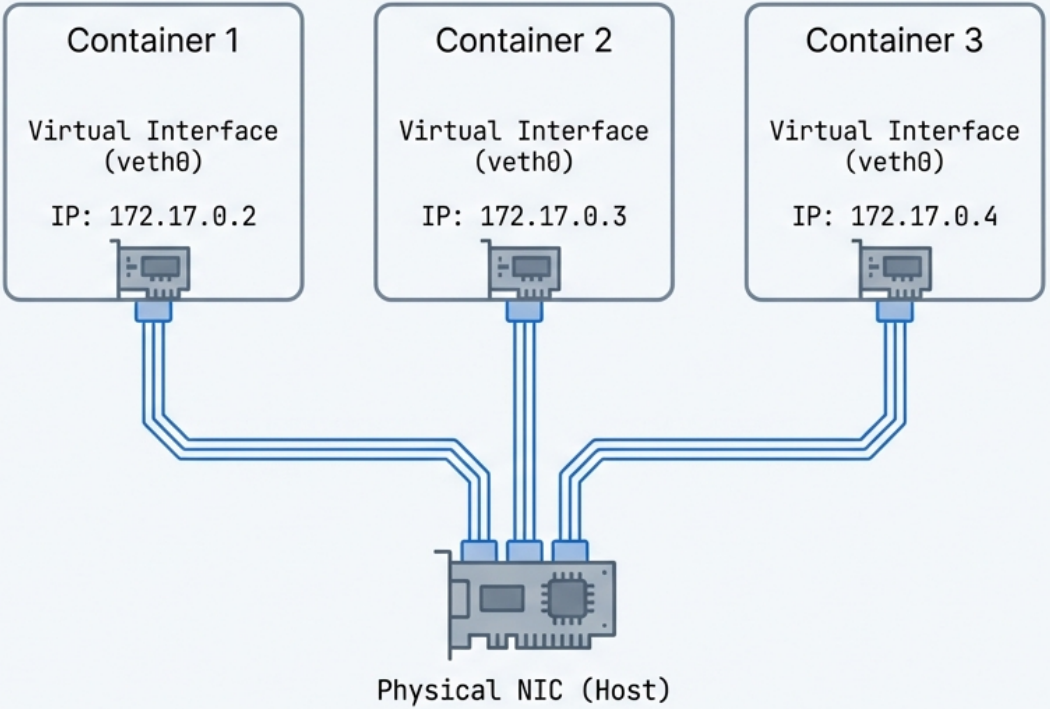

Network Namespace (Network Isolation)


- Each container gets its own completely isolated network stack: its own IP address, routing table, firewall rules (iptables), and ports.

- Two containers can each bind to port 8080 simultaneously without conflict, because they live in different network namespaces.

- The container runtime (e.g., Docker) creates virtual Ethernet pairs (`veth`) to connect the container’s network namespace to the host.

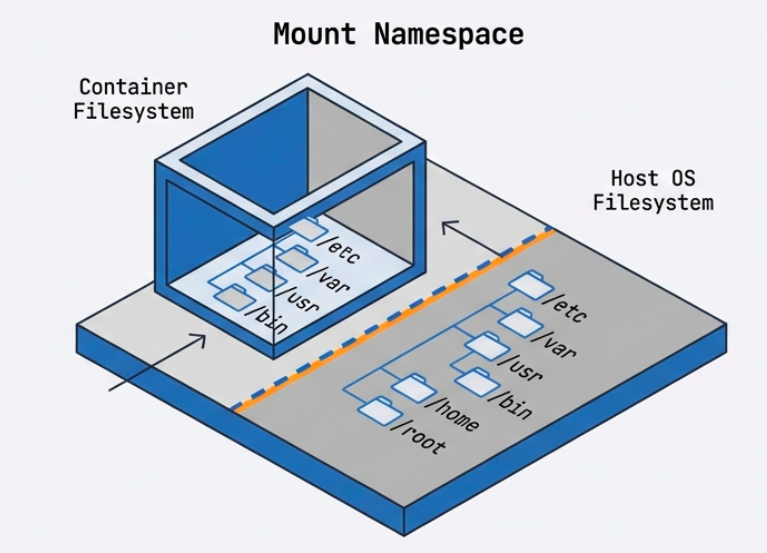

Mount Namespace (File System Isolation)


- Gives the container its own isolated view of the file system hierarchy.

- The container can have `/etc`, `/var`, `/home`, etc. that are completely separate from the host’s file system.

- Changes to the file system inside the container (in the writable layer) are invisible to other containers and do not appear in the host’s own directory tree.

UTS Namespace (Hostname Isolation)

- Allows the container to have its own hostname and domain name, independent of the host.

- This is why a container can report its hostname as `web-server-1` while the host machine is named `prod-node-42`.

IPC Namespace (Inter-Process Communication Isolation)

- Isolates IPC resources, namely System V IPC objects (message queues, semaphores, shared memory segments) and POSIX message queues.

- Prevents processes in one container from interfering with IPC resources used by another.

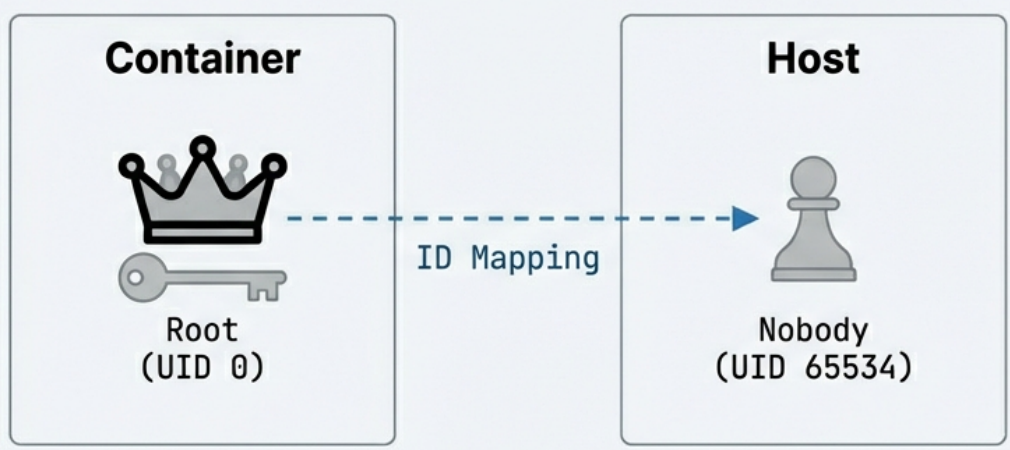

User Namespace (User Identity Isolation)


- Maps user IDs inside the container to different user IDs on the host.

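- A process running as UID 0 (root) inside the container can be mapped to an unprivileged UID on the host (e.g., UID 100000, taken from a subordinate ID range on the host).

- This is a critical security feature: even if a malicious process “escapes” the container, it runs as a non-privileged host user. It only applies when the runtime actually creates a user namespace; Docker, for example, does not do so by default.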
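The kernel exposes each user namespace's mapping in `/proc/<pid>/uid_map`, one range per line in the form `<first UID inside> <first UID outside> <count>`. This Python sketch parses that format and translates a container UID to a host UID. The function names are hypothetical, and the example range (container UIDs 0 to 65535 mapped to host UIDs 100000 to 165535) is illustrative; actual ranges depend on the host's configuration.

```python
from typing import List, Optional, Tuple

Range = Tuple[int, int, int]  # (first ID inside, first ID outside, count)

def parse_uid_map(text: str) -> List[Range]:
    """Parse the contents of /proc/<pid>/uid_map."""
    ranges = []
    for line in text.splitlines():
        if line.strip():
            inside, outside, count = (int(field) for field in line.split())
            ranges.append((inside, outside, count))
    return ranges

def to_host_uid(container_uid: int, ranges: List[Range]) -> Optional[int]:
    """Translate a UID inside the namespace to the corresponding host UID."""
    for inside, outside, count in ranges:
        if inside <= container_uid < inside + count:
            return outside + (container_uid - inside)
    return None  # unmapped; the kernel reports such IDs as the overflow UID (usually 65534)

# Hypothetical mapping: container UIDs 0-65535 -> host UIDs 100000-165535.
EXAMPLE_UID_MAP = "         0     100000      65536\n"

if __name__ == "__main__":
    ranges = parse_uid_map(EXAMPLE_UID_MAP)
    print(to_host_uid(0, ranges))  # 100000
```

Outside any user namespace, `/proc/self/uid_map` typically reads `0 0 4294967295`, the identity mapping, so root stays root.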

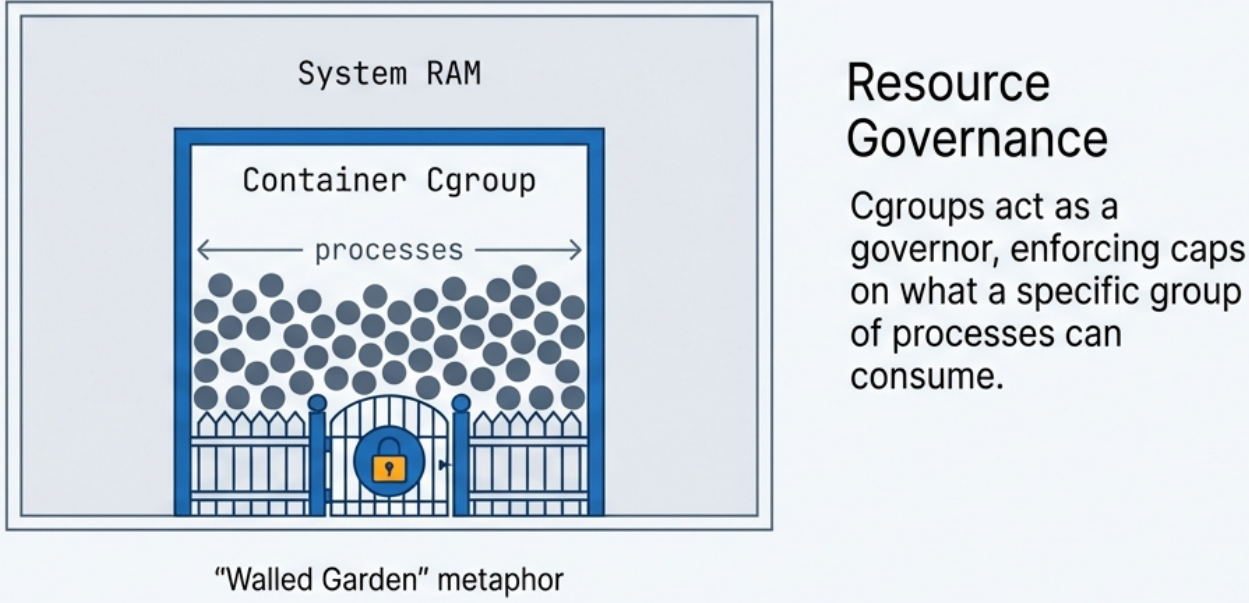

2. Control Groups (cgroups) - Resource Management


While namespaces provide isolation, cgroups provide resource governance. They allow the kernel to limit, account for, and isolate the resource usage of a group of processes.

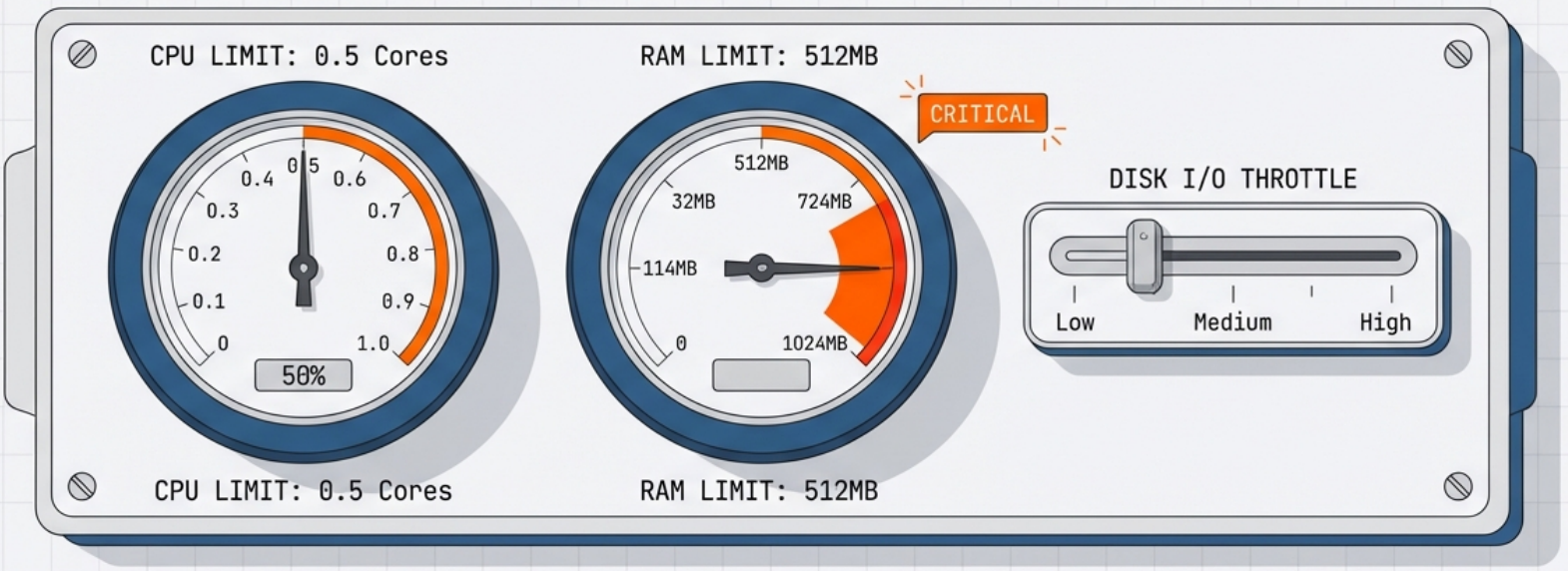

CPU Limits

- You can cap how much CPU time a container’s processes receive.

- Example: Limit a container to 0.5 CPU cores, even on a 32-core machine.

- Implemented via CPU shares, CPU quotas, and CPU periods.
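Under cgroup v2 the hard cap lives in a single file, `cpu.max`, holding a quota and a period in microseconds (cgroup v1 splits these into `cpu.cfs_quota_us` and `cpu.cfs_period_us`). Limiting a container to 0.5 CPU with the default 100 ms period means a quota of 50 ms of CPU time per period. This Python sketch only computes the value; writing it to `/sys/fs/cgroup/<group>/cpu.max` (where `<group>` is a placeholder) requires root or a delegated cgroup. The function names are hypothetical.

```python
DEFAULT_PERIOD_US = 100_000  # 100 ms, the kernel's default CFS period

def cpu_max_for(cpus, period_us=DEFAULT_PERIOD_US):
    """Return the cgroup v2 cpu.max value that caps a group at `cpus` CPUs.

    cpu.max holds "<quota> <period>": the group may use at most `quota`
    microseconds of CPU time (summed over all cores) in every `period`
    microseconds. A quota of "max" means no limit.
    """
    if cpus is None:
        return f"max {period_us}"
    if cpus <= 0:
        raise ValueError("cpus must be positive")
    quota_us = round(cpus * period_us)
    return f"{quota_us} {period_us}"

def cpus_from_cpu_max(value):
    """Inverse: how many CPUs' worth of time a cpu.max value allows (None = unlimited)."""
    quota, period = value.split()
    return None if quota == "max" else int(quota) / int(period)
```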

Memory Limits

- Set a maximum amount of RAM a container can use.

- Example: Docker's `--memory=512m` flag restricts the container to 512 MiB of RAM.

- If the container exceeds its memory limit, the kernel’s OOM (Out-Of-Memory) killer will terminate a process in the container.
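A size string such as `512m` has to become a plain byte count before it reaches the kernel, which expects bytes in cgroup v2's `memory.max` (`memory.limit_in_bytes` under v1). The Python sketch below is a simplified parser written for illustration, not Docker's own: it assumes an integer with an optional `b`, `k`, `m`, or `g` suffix, each a power of 1024. The function name is hypothetical.

```python
import re

_UNITS = {"": 1, "b": 1, "k": 1024, "m": 1024**2, "g": 1024**3}

def parse_memory(limit: str) -> int:
    """Convert a Docker-style size such as '512m' into a byte count (simplified)."""
    match = re.fullmatch(r"(\d+)([bkmg]?)", limit.strip().lower())
    if match is None:
        raise ValueError(f"unrecognised memory size: {limit!r}")
    number, unit = match.groups()
    return int(number) * _UNITS[unit]
```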

Block I/O Limits

- Throttle the rate at which a container can read from or write to disk.

- Prevents one container from saturating disk bandwidth.
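With cgroup v2, disk throttling is configured per block device in the `io.max` file, one line per device: the device's `major:minor` numbers followed by `rbps`/`wbps` (bytes per second) and `riops`/`wiops` (I/O operations per second) limits. Docker's `--device-write-bps` and related flags express the same kind of limit. This Python sketch only builds such a line; the function names are hypothetical, and `8:0` (conventionally the first SCSI or SATA disk) is just an example device.

```python
import os

def io_max_line(major, minor, rbps=None, wbps=None, riops=None, wiops=None):
    """Build a cgroup v2 io.max entry such as '8:0 wbps=10485760'.

    rbps/wbps are bytes per second, riops/wiops are operations per second;
    "max" removes a limit. Keys left as None are not written.
    """
    limits = {"rbps": rbps, "wbps": wbps, "riops": riops, "wiops": wiops}
    fields = [f"{key}={value}" for key, value in limits.items() if value is not None]
    if not fields:
        raise ValueError("specify at least one limit")
    return f"{major}:{minor} " + " ".join(fields)

def device_numbers(device_path):
    """Major and minor numbers of a block device node such as /dev/sda."""
    rdev = os.stat(device_path).st_rdev
    return os.major(rdev), os.minor(rdev)
```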

The “Noisy Neighbor” Problem


Without cgroups, a single poorly written or malicious container could consume 100% of the CPU or RAM, starving every other application on the same host. cgroups let the kernel enforce per-container limits, which is what makes multi-tenant container hosting reliable.

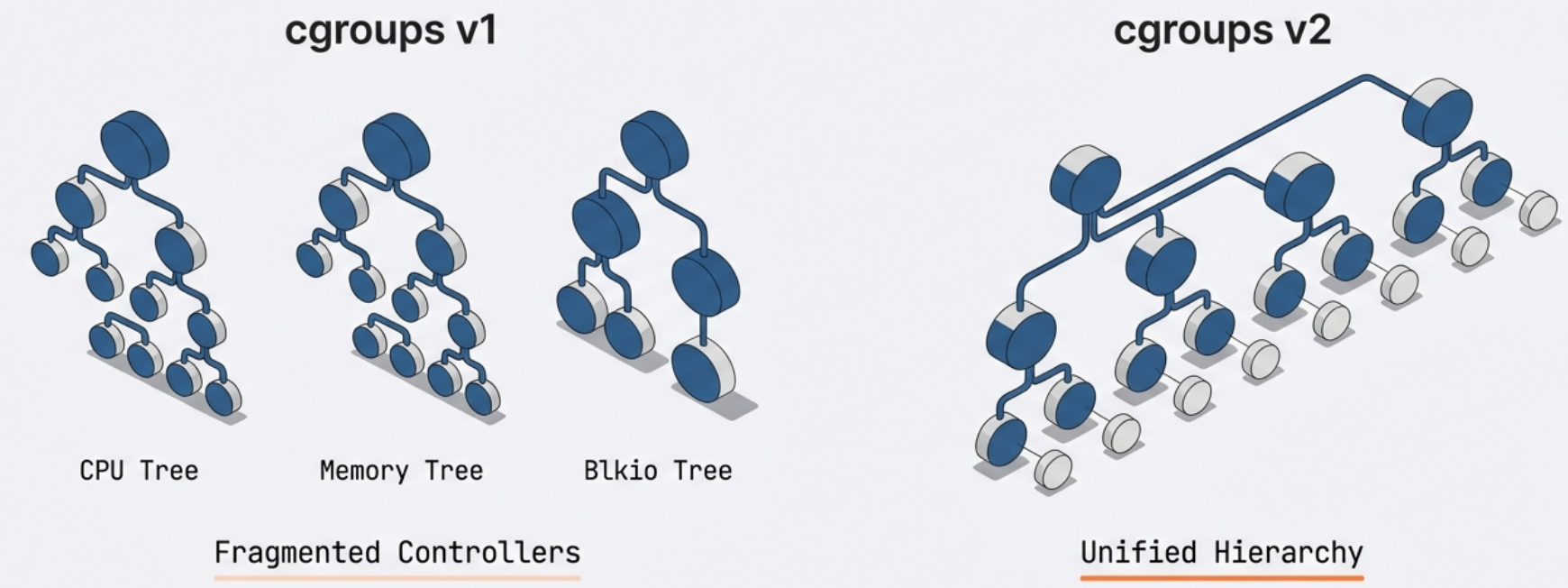

cgroups v1 vs. v2


- cgroups v1: Each resource controller (cpu, memory, blkio) is managed separately.

- cgroups v2: A unified hierarchy where all controllers are managed together. More modern and consistent. Required by some newer container runtimes.
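You can tell which mode a host uses from `/proc/self/cgroup`. Under v1, each line names its hierarchy's controllers (e.g., `11:cpu,cpuacct:/docker/abc123`); under v2, there is a single line of the form `0::/path`. Some distributions run a “hybrid” setup that shows both. This Python sketch classifies that text; the function names and the sample paths are made up for illustration.

```python
def parse_proc_cgroup(text):
    """Parse /proc/<pid>/cgroup into (hierarchy_id, controllers, path) tuples."""
    entries = []
    for line in text.splitlines():
        if not line:
            continue
        hierarchy_id, controllers, path = line.split(":", 2)  # path may contain ':'
        entries.append((int(hierarchy_id), controllers.split(",") if controllers else [], path))
    return entries

def cgroup_mode(text):
    """'v2' for the unified hierarchy, 'v1' for legacy, 'hybrid' if both appear."""
    entries = parse_proc_cgroup(text)
    has_unified = any(hid == 0 and not ctrls for hid, ctrls, _ in entries)
    has_legacy = any(ctrls for _, ctrls, _ in entries)
    if has_unified and has_legacy:
        return "hybrid"
    return "v2" if has_unified else "v1"

# Illustrative samples with made-up container paths.
V2_SAMPLE = "0::/system.slice/docker-abc123.scope\n"
V1_SAMPLE = "12:memory:/docker/abc123\n11:cpu,cpuacct:/docker/abc123\n"
```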

3. Union File Systems & Copy-on-Write (CoW) - Storage


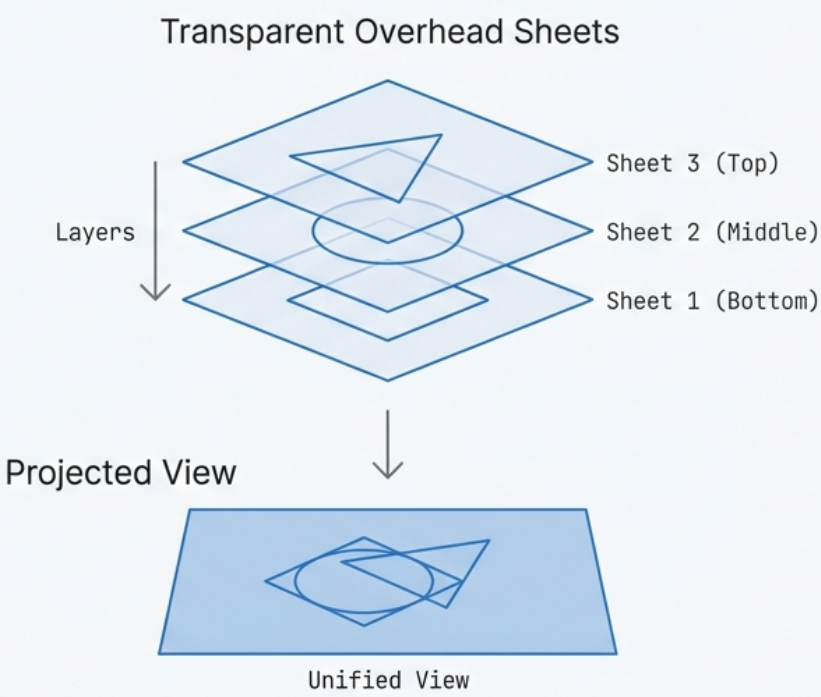

Container images are built in layers. This layered architecture is what makes images lightweight, fast to pull, and highly efficient in storage and memory usage.

Image Layers (Lower Layers - Read-Only)

A container image is composed of multiple stacked, read-only layers. Each layer represents a set of file system changes (additions, modifications, deletions) from one build step.

Example layer stack for a Java web app:

- Layer 4 → App JAR file added (top, most specific)

- Layer 3 → JDK installed

- Layer 2 → `apt-get update` + curl

- Layer 1 → Base Ubuntu 22.04 image (bottom, most general)

Each layer is content-addressed: it is identified by a cryptographic hash (typically SHA-256) of its contents. This means:

- If two images share the same base layer (e.g., Ubuntu 22.04), that layer is stored once on disk and shared in memory, even if 50 containers are running from different images.

- Pulling a new image version is fast: only the changed layers need to be downloaded.
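A toy Python model of content addressing, using `hashlib.sha256`. Real registries hash each layer's (usually compressed) tar archive and reference those digests from an image manifest; here short byte strings stand in for the archives, and the `LayerStore` class is hypothetical.

```python
import hashlib
from typing import Dict, List

class LayerStore:
    """Toy content-addressed store: each layer is keyed by the SHA-256 of its bytes."""

    def __init__(self) -> None:
        self.blobs: Dict[str, bytes] = {}

    @staticmethod
    def digest(blob: bytes) -> str:
        return "sha256:" + hashlib.sha256(blob).hexdigest()

    def put(self, blob: bytes) -> str:
        key = self.digest(blob)
        self.blobs.setdefault(key, blob)  # identical content is stored only once
        return key

    def missing(self, manifest: List[str]) -> List[str]:
        """Digests a pull would have to download; the rest are already cached."""
        return [d for d in manifest if d not in self.blobs]

if __name__ == "__main__":
    store = LayerStore()
    base = store.put(b"<Ubuntu 22.04 files>")
    jdk = store.put(b"<JDK files>")
    v1 = [base, jdk, store.put(b"<app v1 jar>")]
    v2 = [base, jdk, LayerStore.digest(b"<app v2 jar>")]
    print(len(store.missing(v2)), "of", len(v2), "layers need downloading")
```

Because the key is derived from the content, two images that share a base layer automatically share one stored copy, and a pull of a new version only fetches the digests that are not already present.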

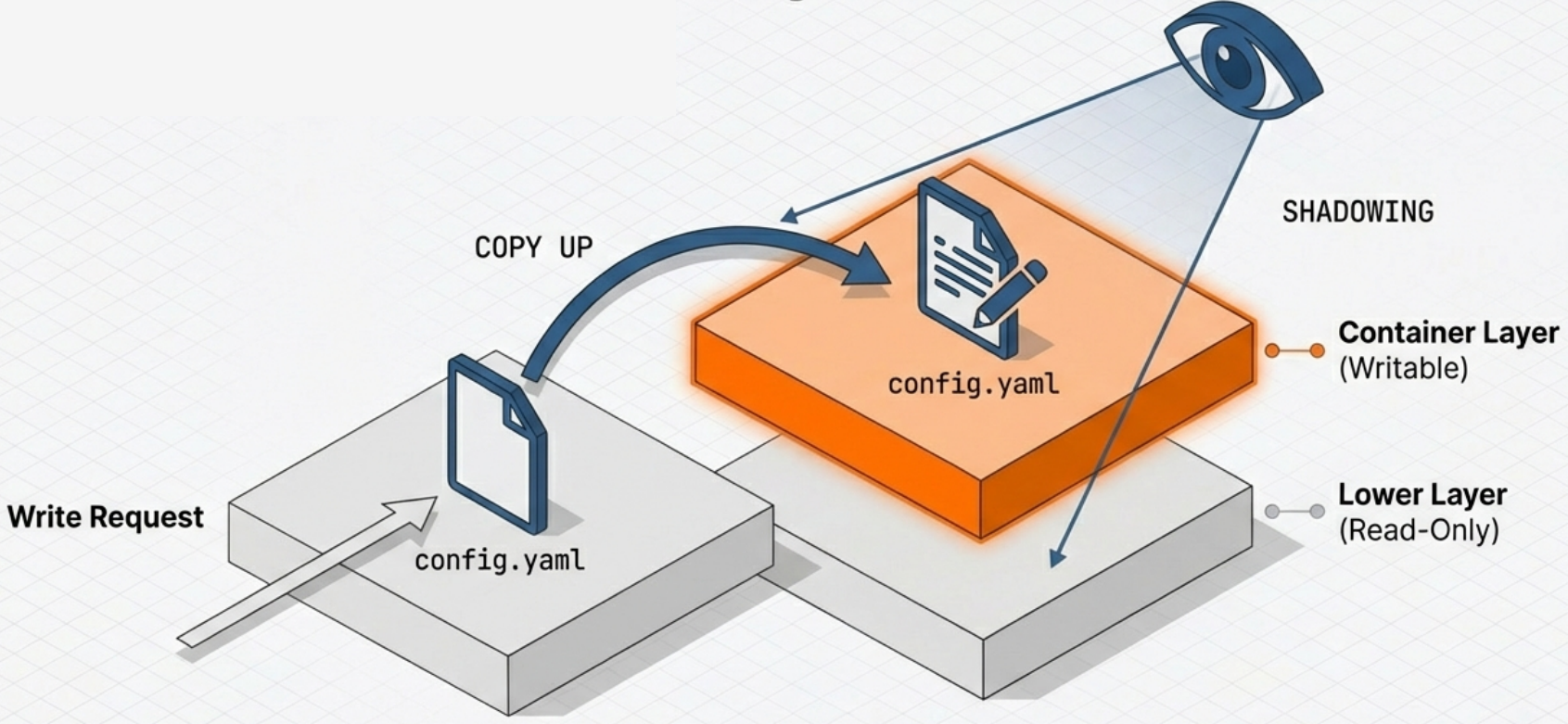

Container Layer (Upper Layer - Read-Write)

When a container is started, a thin, ephemeral read-write layer is added on top of the read-only image layers. This is the only place where the running container can write new data.

- Container Layer → Read-Write (ephemeral; discarded when the container is removed)

- Layer 4 → Read-Only

- Layer 3 → Read-Only

- Layer 2 → Read-Only

- Layer 1 → Read-Only

Layers 1 to 4 are the image layers: shared and immutable.

Copy-on-Write (CoW) Mechanism


The key question is: what happens if a running container needs to modify a file that exists in a read-only layer?

The answer is Copy-on-Write:

- When a container process writes to a file that exists only in a read-only lower layer, the file system detects this.

- The file is copied up from the read-only layer into the writable container layer.

- The modification is applied to the copy in the upper layer.

- Subsequent reads of that file will see the modified version from the upper layer (it shadows the original below).

- When the container is deleted, the entire upper read-write layer is discarded - the original image layers remain perfectly intact.

This is why stopping and removing a container does not destroy the image, and why you can spin up 100 containers from the same image without 100 copies of the image on disk.
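To see copy-on-write in action without root privileges, here is a toy in-memory model in Python. It is not how OverlayFS is implemented: each layer is just a dict from path to file contents, and deletions of lower-layer files are recorded as “whiteouts” (OverlayFS uses special whiteout files for the same purpose). The class and method names are hypothetical.

```python
from typing import Dict, List

class OverlayView:
    """Toy in-memory union view: read-only lower layers plus one writable upper layer."""

    def __init__(self, lowers: List[Dict[str, str]]):
        self.lowers = lowers              # topmost layer first; never modified
        self.upper: Dict[str, str] = {}   # the container's read-write layer
        self.whiteouts = set()            # lower-layer paths deleted in this container

    def read(self, path: str) -> str:
        if path in self.upper:
            return self.upper[path]       # the upper copy shadows the layers below
        if path in self.whiteouts:
            raise FileNotFoundError(path)
        for layer in self.lowers:         # search from the top layer down
            if path in layer:
                return layer[path]
        raise FileNotFoundError(path)

    def append(self, path: str, data: str) -> None:
        """Modify a file: copy it up on the first write, then change only the copy."""
        if path not in self.upper:
            try:
                self.upper[path] = self.read(path)  # copy-up from a lower layer
            except FileNotFoundError:
                self.upper[path] = ""               # brand-new file
            self.whiteouts.discard(path)
        self.upper[path] += data

    def delete(self, path: str) -> None:
        self.read(path)                   # raises if the file is not visible
        self.upper.pop(path, None)
        if any(path in layer for layer in self.lowers):
            self.whiteouts.add(path)      # hide the lower copy without touching it
```

Throwing away `upper` and `whiteouts` corresponds to removing the container: the lower dicts, like image layers, were never modified, so a fresh view over them sees the original files.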

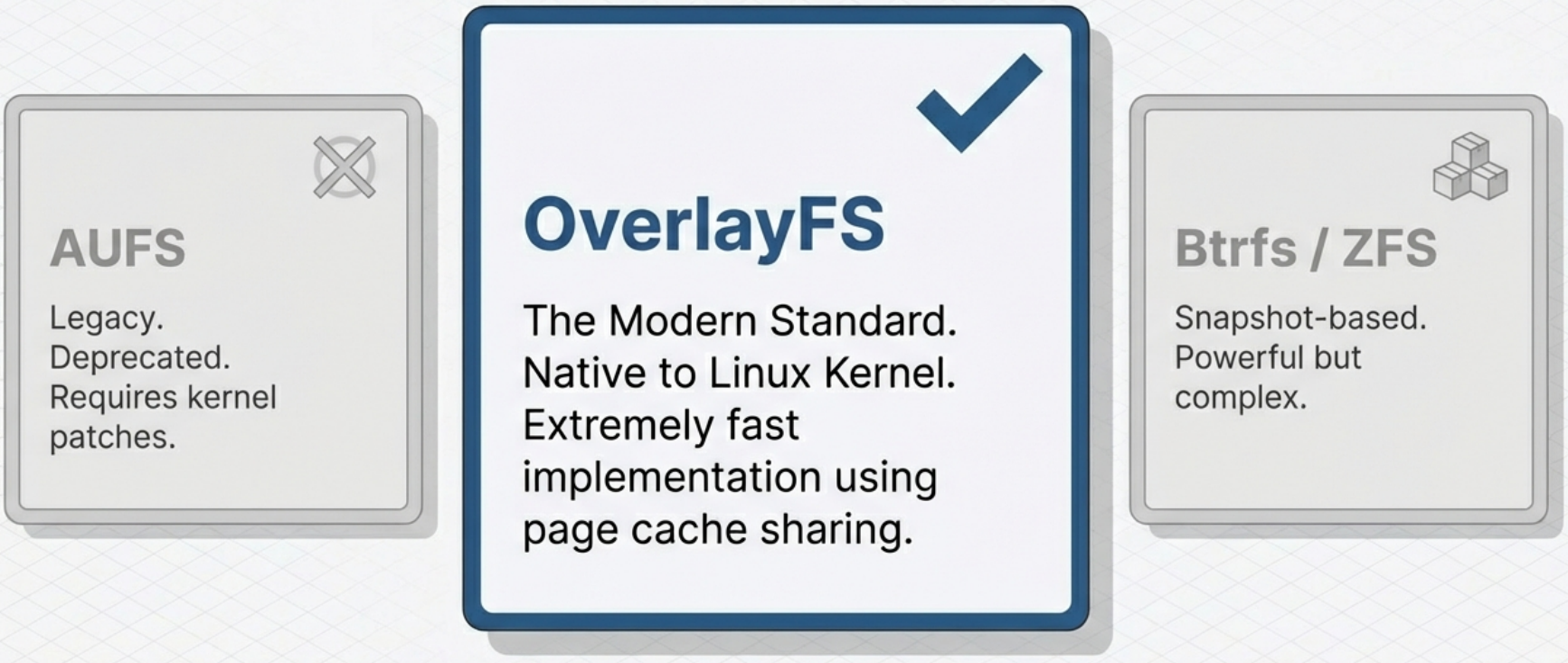

Union File System Implementations


The “stacking” of layers into a single unified view is handled by a Union File System. Common implementations include:

| Implementation | Notes |
|---|---|
| OverlayFS | The default for Docker on modern Linux. Uses kernel-native overlay mounts. Very fast. |
| AUFS | An older union file system, once the Docker default. Requires a kernel patch; largely deprecated. |
| Btrfs | A copy-on-write file system at the block level. Offers snapshots. |
| ZFS | Provides strong integrity guarantees and efficient snapshots. Less common for containers. |
| Device Mapper | Block-level thin provisioning. Used in older RHEL/CentOS environments. |

Putting It All Together: The Full Container Lifecycle

1. Build → Filesystem-changing Dockerfile instructions (such as RUN, COPY, and ADD) create stacked read-only image layers; each layer is hashed and stored.

2. Push/Pull → Only missing layers are transferred over the network; shared layers are reused from the local cache.

3. Start → The runtime creates:
   - a new set of namespaces (pid, net, mnt, uts, ipc, and user if enabled)
   - a new cgroup for the container's processes
   - a writable CoW layer on top of the image layers
   - a virtual network interface with an IP address

4. Running → The process runs in isolation: it sees its own PID 1, its own /etc, and its own IP. cgroups enforce CPU and memory limits, and writes go to the upper read-write layer.

5. Stop/Delete → On stop, running processes are terminated and the namespaces and cgroups are torn down. On delete, the read-write layer is discarded. The image layers remain untouched throughout.