CI/CD Maturity & Adoption

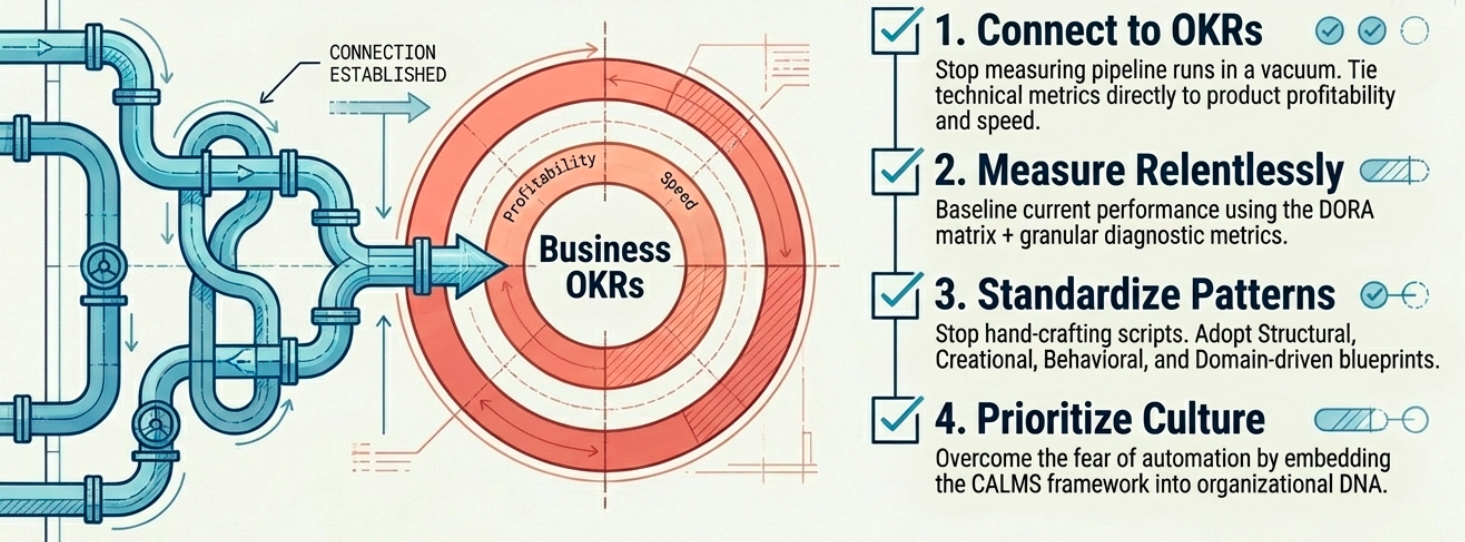

Building a CI/CD pipeline is relatively straightforward. Getting an entire organization to adopt it consistently, reliably, and at scale is something else entirely. CI/CD maturity is not a technical problem - it is an organizational one.

The North Star for CI/CD Design Patterns

Continuous Integration is well-standardized. Continuous Delivery and infrastructure practices like GitOps, however, are still evolving with limited industry consensus.

The “North Star” is a set of four evaluation criteria that organizations can use to assess whether a CI/CD design pattern is ready to deploy and suitable for their context:

| Characteristic | What it evaluates |
|---|---|
| Productivity | How well the pattern leverages reusability, abstraction, maintainability, and flexibility. Includes DORA metrics alignment. |
| Transparency, Traceability, and Accountability | Whether the pattern creates a clear audit trail and reduces administrative overhead in the supply chain. |
| Interoperability | The ability to apply the pattern across a broader product portfolio with minimal manual intervention. |
| Discovery (New Methods) | The ease of injecting new capabilities like AI-enabled tooling, compliance checks, and advanced security measures. |

The CI/CD Design Pattern Catalog

To connect technical CI/CD decisions to business outcomes, organizations can use four foundational design pattern categories. Together, they form a baseline catalog that enables purposeful, scalable software delivery.

| Pattern Category | Focus | What it enables |
|---|---|---|
| Structural | Productivity | Well-defined, well-documented pipeline and infrastructure components that integrate predictably. The most fundamental pattern - the foundation everything else builds on. |
| Creational | Transparency + Accountability | Step-by-step implementation of existing capabilities (e.g., cloud-native CI/CD tools). Reduces complexity by clearly defining roles for managing IaC and pipeline components. |
| Behavioral | Interoperability | Event-driven patterns for communication and interaction within and across pipelines. Relies on well-defined interfaces for high traceability across distributed systems. |
| Domain-Driven | Security + Compliance | Tailor-made patterns for highly regulated industries (aviation, defense, finance). Injects domain-specific security controls and compliance gates that generic patterns don’t address. |

Core Architectural Challenges

Despite CI/CD being a well-understood discipline, practitioners consistently encounter the same architectural bottlenecks when scaling:

| Challenge | Root cause | Impact |
|---|---|---|
| Infrastructure delivery | Generic CI/CD tools are optimized for application code, not infrastructure provisioning | Teams resort to ad-hoc scripts; environments drift; IaC integration is fragile |
| GitOps adoption | GitOps shifts the delivery model from push to pull, requiring a complete mental model change for how triggers work | Teams implement push-based pipelines with GitOps tooling, breaking the pull-based contract |
| Scale | Almost always caused by poor initial pipeline design - no modularity, no caching, no stage isolation | Pipelines become monolithic and slow; adding a new service requires forking the entire configuration |
| Pipeline security | Organizations run security tools in their pipelines but rarely think about securing the pipeline itself | CI/CD infrastructure becomes an attack vector; supply chain vulnerabilities go undetected |
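
The pull-based contract in the GitOps row can be illustrated with a minimal reconcile step. This is a sketch under stated assumptions, not the API of any GitOps tool: `Action`, `reconcile`, and the service names are hypothetical. The point is that the pipeline only commits desired state to Git; an agent in the target environment compares that state with what is running and converges on it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Action:
    kind: str                      # "apply" or "delete"
    name: str
    version: Optional[str] = None  # target version for "apply" actions


def reconcile(desired: dict[str, str], actual: dict[str, str]) -> list[Action]:
    """Compute the actions an agent would take to converge on the Git state."""
    actions = []
    # Anything missing or at the wrong version gets (re)applied.
    for name, version in sorted(desired.items()):
        if actual.get(name) != version:
            actions.append(Action("apply", name, version))
    # Anything running that Git no longer declares gets removed.
    for name in sorted(actual.keys() - desired.keys()):
        actions.append(Action("delete", name))
    return actions


# Desired state as declared in the Git repository (hypothetical services).
desired = {"api": "1.4.0", "worker": "2.0.1"}
# State the agent observes in the running environment.
actual = {"api": "1.3.9", "legacy-cron": "0.9.0"}

plan = reconcile(desired, actual)
for action in plan:
    print(action)
```

A push-based pipeline that deploys directly would bypass this comparison, which is how drift between Git and the environment goes unnoticed.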

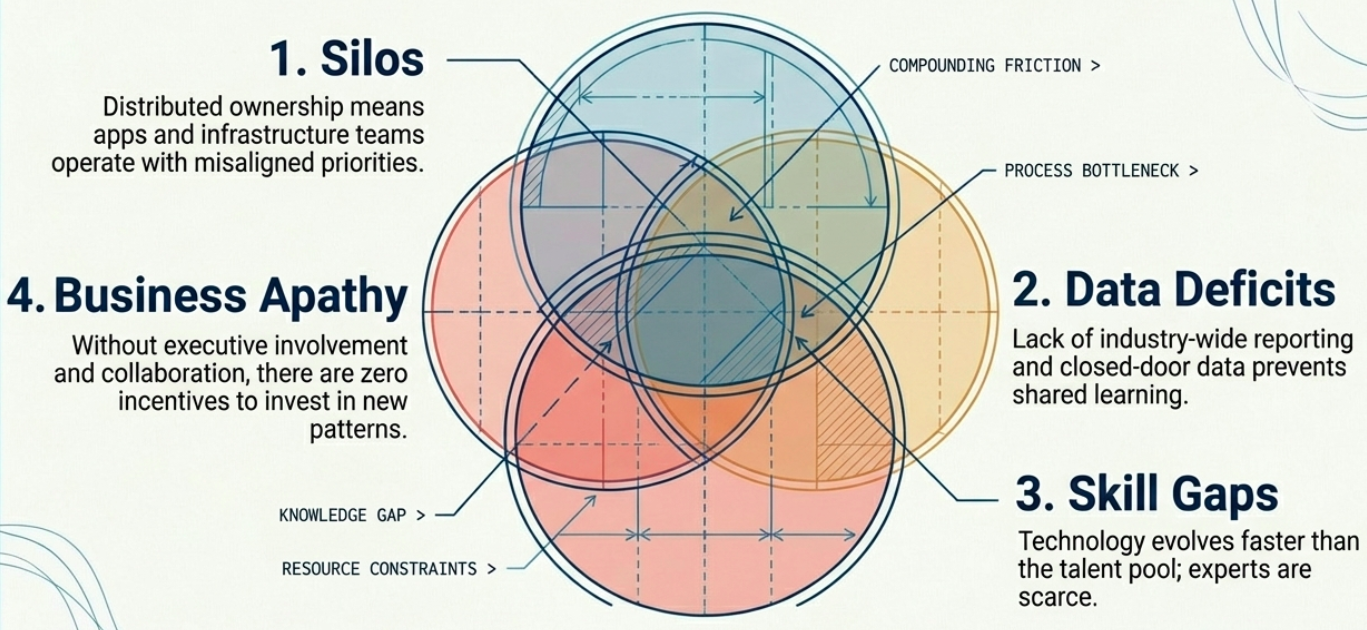

Scaling Adoption: Systemic Barriers


Scaling CI/CD design patterns across an organization requires removing deeply embedded structural problems first:

| Barrier | What it looks like |
|---|---|
| Lack of quality data | Limited industry survey data on CI/CD adoption means teams are guessing at “normal.” Problems that are common feel unique and unsolvable. |
| Silos and distributed ownership | Infrastructure owned by one team, applications owned by another, pipelines owned by a third. No single team has visibility or authority over the full delivery chain. |
| Expertise gaps | Scaling adoption requires engineers who understand both the application domain and CI/CD tooling deeply. This skillset is rare and in high demand. |
| Weak business alignment | Without business stakeholders invested in CI/CD standardization, there is no organizational incentive to maintain a shared pattern catalog. |

Cultural Resistance to Change

The principles of CI/CD are inseparable from the cultural principles of DevOps - particularly the CALMS framework (Culture, Automation, Lean, Measurement, Sharing). The most persistent CI/CD adoption barrier is cultural, not technical.

Resistance typically appears in two forms:

The “Lift and Shift” Mentality

Teams port their existing scripts and manual processes directly into new CI/CD tooling without rethinking the underlying workflow. The tool changes; the logic doesn’t. The result is a slow, fragile pipeline wrapped in a modern YAML file.

“We always did it this way” is the most expensive sentence in a technology organization.

Fear of Automation

| Who | The fear | The real consequence |
|---|---|---|
| Engineers | “Full automation will replace me.” | Resistance to writing robust pipelines; manual steps are left in as job security. |
| Management | “We can’t trust automated decisions with production.” | Approval gates are inserted unnecessarily, destroying the value of continuous deployment. |

Assessments and Audits

Improving a CI/CD pipeline isn’t purely a technical exercise - it requires a structured, ongoing process of measuring where you are and validating what you’ve built. Assessments and audits serve those two distinct purposes.

| | Assessment | Audit |
|---|---|---|
| Definition | Surveys, questionnaires, and observational tools that produce actionable guidance and recommendations | A systematic, independent, documented process for obtaining objective evidence (ISO definition) |
| Scope | Can range from broad portfolio reviews to narrow financial or performance evaluations | Evaluates security/compliance requirements, organizational guidelines, and evidence of policy adherence |
| Who runs it | Internal teams, platform engineers, or vendor-provided complementary services | Internal compliance teams or external third-party organizations |
| Output | Recommendations to optimize efficiency and resiliency | Formal findings, corrective action items, and compliance attestation |
| Execution | Tool-driven: surveys, discovery software, DORA metrics | Manual or technology-driven; internal or third-party |

Common CI/CD assessment types:

- Performance assessment

- Risk assessment

- CI/CD portfolio assessment

- Financial assessment (licensing, rightsizing, infrastructure optimization)

- Prescriptive assessment

Conducting Assessments

The 5-Step Assessment Workflow

Every comprehensive assessment follows this structured process regardless of type:

| Step | What happens |
|---|---|
| 1. Define objectives | Establish the key goals and desired outcomes - this determines the assessment type (performance, portfolio, financial, etc.) |
| 2. Plan | Define frequency, duration, and the specific tools that will collect the data |
| 3. Collect data | Execute the assessment - gather data through the planned tools and methods |
| 4. Analyze data | Interpret findings, from simple pattern recognition to complex financial modeling (licensing recommendations, rightsizing, infrastructure cost optimization) |
| 5. Recommend | Produce actionable recommendations and prioritized insights tied back to the original objectives |

Assessment Tools

| Tool / Technique | Purpose |
|---|---|
| DORA Metrics | The four-metric framework from the DORA (DevOps Research and Assessment) program, now part of Google Cloud, for measuring velocity (deployment frequency, lead time for changes) and stability (change failure rate, mean time to restore or MTTR) |
| Gamification | Tier-based scoring system to motivate improvement in team behaviors and pipeline processes |
| Txture | Infrastructure mapping and multi-dimensional assessment |
| Matilda | Agentless hardware and application topology discovery |
| Apache DevLake | Ingests and visualizes fragmented data across DevOps tools to surface cross-tool insights |
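
To make the DORA row concrete, here is a minimal sketch of how the four metrics could be derived from deployment records. The `Deployment` fields, the `dora_metrics` function, and the choice of median for lead time are assumptions made for illustration; real tooling collects these values from the VCS, the CI/CD platform, and incident systems, and may define each metric differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments: list[Deployment], window_days: int) -> dict[str, float]:
    """Summarize the four DORA metrics over a reporting window."""
    if not deployments:
        raise ValueError("at least one deployment is required")
    failures = [d for d in deployments if d.failed]
    lead_hours = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deployments
    ]
    restore_hours = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deployments_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lead_hours),
        "change_failure_rate": len(failures) / len(deployments),
        "mean_time_to_restore_hours": (
            sum(restore_hours) / len(restore_hours) if restore_hours else 0.0
        ),
    }


# Hypothetical sample: four deployments in a two-day window, one of which failed.
t0 = datetime(2024, 1, 1, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0, t0 + timedelta(hours=8), failed=True,
               restored_at=t0 + timedelta(hours=10)),
    Deployment(t0, t0 + timedelta(hours=12)),
    Deployment(t0, t0 + timedelta(hours=24)),
]
metrics = dora_metrics(sample, window_days=2)
print(metrics)
```

The first two values describe velocity and the last two describe stability, matching the split in the table above.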

Common Assessment Challenges

| Challenge | What it looks like |
|---|---|
| Over-standardization | Common implementations become too rigid, reducing team flexibility and slowing delivery |
| Ivory tower teams | Assessment findings are dictated top-down rather than being guided by the practitioners who build and run the pipelines |
| Documentation overhead | Sustaining pattern libraries and audit templates introduces administrative cost that must be budgeted for |
| Linear progression constraints | Traditional maturity models assume sequential advancement - real teams skip, regress, and parallelize across phases |

The Auditing Process

Audits are a formal process to identify and close gaps in a CI/CD system’s security, compliance, and policy posture. Because auditability depends on traceability, the pipeline must produce artifacts - logs, provenance records, signed commits, SBOM outputs - that auditors can inspect.
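
As an illustration of an inspectable artifact, the sketch below binds a build output's SHA-256 digest to the commit and pipeline run that produced it. The record layout, field names, and sample values are hypothetical and do not follow any specific provenance standard; a real pipeline would typically also sign the record.

```python
import hashlib
import json


def evidence_record(artifact: bytes, commit: str, pipeline_run: str) -> dict[str, str]:
    """Bind an artifact's SHA-256 digest to the commit and run that produced it."""
    return {
        "artifact_sha256": hashlib.sha256(artifact).hexdigest(),
        "commit": commit,
        "pipeline_run": pipeline_run,
    }


def verify(artifact: bytes, record: dict[str, str]) -> bool:
    """Re-hash the artifact and compare it against the recorded digest."""
    return hashlib.sha256(artifact).hexdigest() == record["artifact_sha256"]


# Hypothetical build output and identifiers.
artifact = b"example build output"
record = evidence_record(artifact, commit="abc1234", pipeline_run="run-42")
print(json.dumps(record, indent=2, sort_keys=True))
```

An auditor who holds the artifact can call `verify` to confirm it is the one the record describes; any modification to the bytes changes the digest.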

Four Phases of an Audit

| Phase | What happens |
|---|---|
| 1. Planning | Auditors gain system understanding by examining traceability tools, available evidence artifacts, and policy definitions |
| 2. Developing findings | Active investigation: testing, vulnerability assessments, data analysis. Audit tools and filters process and correlate the gathered evidence |
| 3. Reporting | Findings are compiled into formal reports and presented to stakeholders |
| 4. Follow-up | Corrective actions are evaluated. Auditors track how many identified gaps have been resolved and by when |
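
The follow-up phase lends itself to simple bookkeeping. The sketch below counts how many findings were resolved, how many were resolved by their due date, and how many are open and overdue. The `Finding` structure and the sample findings are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Finding:
    title: str
    due: date                           # agreed remediation deadline
    resolved_on: Optional[date] = None  # None while the gap is still open


def follow_up_summary(findings: list[Finding], today: date) -> dict[str, int]:
    """Count resolved, on-time, and overdue findings for the follow-up report."""
    resolved = [f for f in findings if f.resolved_on is not None]
    on_time = [f for f in resolved if f.resolved_on <= f.due]
    overdue = [f for f in findings if f.resolved_on is None and f.due < today]
    return {
        "total": len(findings),
        "resolved": len(resolved),
        "resolved_on_time": len(on_time),
        "open_overdue": len(overdue),
    }


findings = [
    Finding("Unsigned release artifacts", due=date(2024, 3, 1), resolved_on=date(2024, 2, 20)),
    Finding("Shared deploy credential", due=date(2024, 3, 1), resolved_on=date(2024, 3, 10)),
    Finding("No rollback test", due=date(2024, 3, 15)),
    Finding("Build logs not retained", due=date(2024, 6, 1)),
]
summary = follow_up_summary(findings, today=date(2024, 4, 1))
print(summary)
```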

Shifting Audits Left

Traditionally, audits are post-implementation activities - conducted before a compliance deadline or after a security incident. The modern approach embeds audit controls into the pipeline from the first commit:

| Shift-left benefit | What it enables |
|---|---|
| Proactive issue detection | Inconsistencies surface during development, not weeks later during a formal review |
| Automated compliance | Compliance checks run automatically in the pipeline - no manual intervention required |
| Real-time visibility | Audit trails and telemetry provide a live view of the delivery process, making it trivial to produce evidence during external audits |
| Standardization | Templates, checklists, and automated guidelines enforce best practices from day one - not after the fact |
| Agile alignment | Embedded audit controls complement rapid iteration; teams get fast feedback and can act immediately |

Audit Checklists

Integrating a standard audit checklist into CI/CD pipelines increases traceability, improves auditability, and reduces end-of-cycle compliance scrambles. Checklists divide into two categories:

General Audit Points

Foundational checks that reduce general pipeline risk and streamline implementation:

| Area | What to verify |
|---|---|
| Source code management | All changes tracked in VCS; branch management and code review processes established |
| Build automation | Builds are reproducible and fully automated; build scripts are versioned; failures addressed promptly |
| Continuous integration | Automated unit, integration, and regression tests run on every commit; code quality gates enforced |
| Continuous delivery | Staging and production deployments are automated; deployment scripts versioned; rollback mechanisms tested |
| Security | Security tooling integrated into the pipeline; secrets managed securely; SBOMs produced; access controls on CI/CD infrastructure |
| Monitoring and logging | Build/deployment logs retained; pipeline health monitored; alerts configured for critical failures |
| Compliance and documentation | Industry standards adhered to; documentation kept current; complete audit trails maintained |
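
A few of these checks can be expressed as code so the pipeline evaluates them on every run. The following is a minimal sketch: the `pipeline` facts, the check names, and the 90-day log retention threshold are all assumptions for illustration and are not taken from the checklist itself.

```python
from typing import Callable

# Hypothetical pipeline facts; a real audit would collect these from the
# VCS and the CI/CD platform rather than hard-code them.
pipeline = {
    "build_scripts_versioned": True,
    "tests_on_every_commit": True,
    "rollback_tested": False,
    "sbom_produced": True,
    "log_retention_days": 30,
}

# Each checklist item maps to a predicate over the collected facts.
CHECKS: dict[str, Callable[[dict], bool]] = {
    "build scripts versioned": lambda p: p["build_scripts_versioned"],
    "tests run on every commit": lambda p: p["tests_on_every_commit"],
    "rollback mechanism tested": lambda p: p["rollback_tested"],
    "SBOM produced": lambda p: p["sbom_produced"],
    # The 90-day threshold is an assumed policy value.
    "logs retained >= 90 days": lambda p: p["log_retention_days"] >= 90,
}


def run_checklist(facts: dict) -> list[str]:
    """Return the names of the checklist items that failed."""
    return [name for name, check in CHECKS.items() if not check(facts)]


failed = run_checklist(pipeline)
for name in failed:
    print(f"FAILED: {name}")
```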

Specific Audit Points

Deeper optimizations targeting specific risks within the CI/CD ecosystem:

| Area | What to audit |
|---|---|
| Review controls | Version control flow, pipeline RBAC, credential management, branch and release protection rules |
| Artifact management | Code signing, artifact verification, and configuration drift monitoring |
| Identity management | Inventory of local, external, and shared identities; catalog of stale identities |
| Identity cleanup | Active removal of unnecessary roles, permissions, and identities |
| Tool inventory | Software supply chain dependencies, licensing status, and total toolchain rationalization |
| Automation workflow | Runtime performance of build, test, and deployment automation |
| Risk assessment | Pipeline attack vectors; security flaws in the software stack |
| Third-party services | Full visibility into complex external integrations (e.g., hosted SaaS pipeline tools) |
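
The identity management and cleanup rows can be sketched as a stale-identity scan over last-used dates. The identity names, the dates, and the 90-day idle threshold below are hypothetical; a real scan would read last-used timestamps from the identity provider or the CI/CD platform.

```python
from datetime import date, timedelta


def stale_identities(
    last_used: dict[str, date], today: date, max_idle_days: int = 90
) -> list[str]:
    """Return identities that have not been used within the idle threshold."""
    cutoff = today - timedelta(days=max_idle_days)
    return sorted(name for name, used in last_used.items() if used < cutoff)


# Hypothetical inventory of pipeline identities and when each was last used.
identities = {
    "ci-deployer": date(2024, 5, 28),
    "old-release-bot": date(2023, 11, 2),
    "contractor-jdoe": date(2024, 1, 15),
}

stale = stale_identities(identities, today=date(2024, 6, 1))
print(stale)
```

The resulting list is the candidate set for the cleanup step; removal itself should still go through review, since some identities are used rarely but legitimately.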

Quality Gates

Quality gates are the automated enforcement mechanism that operationalizes audit checklists inside the pipeline. They block a pipeline stage from proceeding if defined criteria aren’t met - making compliance a binary, continuous check rather than a periodic review.

7-Step Quality Gate Implementation

| Step | Action |
|---|---|
| 1. Define quality criteria | Determine what must be enforced: performance benchmarks, compliance requirements, security standards, code coverage thresholds |
| 2. Select tools | Match tools to criteria - SonarQube (static analysis), Snyk (dependency security), JMeter (performance), OWASP ZAP (DAST) |
| 3. Integrate into pipeline | Connect tools via the APIs or plugins provided by the CI/CD platform (Jenkins, GitHub Actions, GitLab CI) |
| 4. Configure gates | Set specific thresholds - e.g., “fail build if critical vulnerabilities detected” or “fail if coverage < 80%” |
| 5. Automate triggers | Set gates to trigger at strategic points: on PR creation, on commit to main, pre-deployment |
| 6. Monitor results | Continuously track outcomes; assign and track remediation of flagged issues |
| 7. Iterate | Review and update thresholds regularly. Quality criteria must evolve as standards and requirements change |
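
The two example thresholds from step 4 can be sketched as a small gate evaluator. `ScanResults`, `evaluate_gate`, and `gate_exit_code` are hypothetical names; in practice the inputs would be parsed from the reports of whichever scanning and coverage tools the pipeline runs.

```python
from dataclasses import dataclass


@dataclass
class ScanResults:
    coverage_percent: float        # from the coverage report
    critical_vulnerabilities: int  # from the security scan


def evaluate_gate(results: ScanResults, min_coverage: float = 80.0) -> list[str]:
    """Return the list of violated criteria; an empty list means the gate passes."""
    violations = []
    if results.critical_vulnerabilities > 0:
        violations.append(
            f"{results.critical_vulnerabilities} critical vulnerabilities detected"
        )
    if results.coverage_percent < min_coverage:
        violations.append(
            f"coverage {results.coverage_percent:.1f}% is below {min_coverage:.1f}%"
        )
    return violations


def gate_exit_code(results: ScanResults) -> int:
    """Map the gate outcome to a process exit code (non-zero blocks the stage)."""
    return 1 if evaluate_gate(results) else 0


for violation in evaluate_gate(ScanResults(coverage_percent=72.5, critical_vulnerabilities=1)):
    print(f"GATE FAILED: {violation}")
```

A pipeline step would typically pass `gate_exit_code(...)` to `sys.exit`, since most CI/CD platforms treat a non-zero exit code as a failed step.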

CI/CD Maturity Model

The maturity model maps where an organization currently stands in its CI/CD design pattern adoption and clarifies the next step required. Advancing through the model removes inconsistencies, improves effectiveness, and eliminates the overhead of maintaining multiple divergent CI/CD postures across teams.

Five Phases of Maturity

| Phase | What it looks like |
|---|---|
| 1. Inconsistent | No unified design patterns exist. Each team implements CI/CD independently. No shared vocabulary, no shared templates, no shared standards. |
| 2. Static | Common patterns are recognized and documented - checklists, guidelines, wikis. A shared vocabulary begins to form. Static resources are a great starting point but become obsolete without active maintenance. |
| 3. Automated | Manual guidelines can’t scale. Teams begin automating pattern generation and enforcement. Requirements can be fed into the system to automatically produce compliant pipeline scaffolding. |
| 4. Dynamic | Patterns actively evolve. Observability tools assess, recommend, and drive improvement. Checklists are extracted dynamically; developers pull from pattern libraries, code snippets, and working examples. |
| 5. Self-managed | The organization maintains a self-managed pattern inventory. Multiple teams pull from the same library seamlessly. The library evolves based on feedback loops, not manual curation cycles. |

Maturity Challenges

| Challenge | What it looks like |
|---|---|
| Over-standardization | Patterns become so rigid that they slow delivery rather than accelerate it. Flexibility must be preserved. |
| Ivory tower governance | Pattern evolution is dictated by a central team rather than guided by practitioners. Federated ownership is the antidote. |
| Documentation overhead | Dynamic pattern libraries require active maintenance. This overhead is real - budget for it or it will accumulate as debt. |
| Linear progression constraints | Teams feel pressure to complete Phase N before starting Phase N+1. This mindset blocks pragmatic improvement. |
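
One way to avoid the linear progression trap is to record maturity per capability instead of assigning a single phase to the whole organization. The sketch below assumes hypothetical capability areas and a simple "improve the lowest first" heuristic; neither is prescribed by the model.

```python
from enum import IntEnum


class Phase(IntEnum):
    INCONSISTENT = 1
    STATIC = 2
    AUTOMATED = 3
    DYNAMIC = 4
    SELF_MANAGED = 5


# Hypothetical capability areas, each assessed independently.
team_maturity = {
    "build": Phase.AUTOMATED,
    "deployment": Phase.STATIC,
    "security": Phase.DYNAMIC,
    "infrastructure": Phase.INCONSISTENT,
}


def next_steps(maturity: dict[str, Phase], limit: int = 2) -> list[tuple[str, Phase]]:
    """Pick the lowest-phase capabilities and the phase each should target next."""
    ranked = sorted(maturity.items(), key=lambda item: (item[1], item[0]))
    return [
        (name, Phase(phase + 1))
        for name, phase in ranked[:limit]
        if phase < Phase.SELF_MANAGED
    ]


targets = next_steps(team_maturity)
for name, target in targets:
    print(f"{name}: move toward {target.name}")
```

Here security is already at the Dynamic phase while infrastructure is still Inconsistent, a mix that a single organization-wide phase number would hide.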

Future Trends

| Trend | What to expect |
|---|---|
| AI-powered assessment | Generative AI will accelerate the creation of audit surveys, questionnaires, and checklists - reducing weeks of manual preparation to hours |
| AI feature auditability | As pipelines deliver AI-powered applications, CI/CD audits must evolve to assess the trustworthiness and accountability of AI workflows - model provenance, training data lineage, bias validation |
| Continuous compliance | Compliance posture shifts from periodic audits to a continuously monitored, pipeline-enforced state - audit evidence is generated automatically, not assembled under deadline |